"The greatest shortcoming of the human race is our inability to understand the exponential function" (Bartlett, 2005^).

After hand tools, language, and then writing, the digital age could be considered the fourth of the ages of thinking technologies. The current digital era is occurring against a backdrop of longstanding and increasingly critical problems, including the population explosion, human health and wealth, and global climate change. Of the many well-defined digital trends in this fourth generation of thought, only one, Moore's Law, is somewhat well known. Twenty-first century awareness of these digital trends, and of the complex thinking and mix of tools on which they are based, is essential to predicting and planning for educational needs more effectively. These trends include: doubling computer capacity; expanding computer communication systems; an increasing pace of change; burgeoning rates of social interaction; an explosive expansion of data; and the growing role of unpredictability. These factors in turn drive discussion about the capacity and need for education, for human beings to change, learn and grow. In short, the topic of change permeates world culture. Increasingly the topic is the surprising developments of exponential change, which much of the human race has shown a rather singular inability to comprehend (Bartlett, 2005).

The examples below indicate that exponential change is becoming the new normal for ever more factors in our cultural and economic systems.

The exponential changes of the 21st century that are being addressed by human culture reflect a reinforcing interplay between genetic, social, cultural and technical capacity. An exponential curve is a useful beginning point in understanding the even more complex implications of nonlinear system behavior. The Interactive Mathematics site uses the exponential nature of stock market data to provide some useful approaches for learning more.
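As a rough illustration of why exponential change outruns intuition, the sketch below compares a quantity that grows by a fixed amount each period with one that doubles each period. The starting value, step size and number of periods are arbitrary assumptions chosen only for illustration; they are not drawn from any of the data sets discussed here.

```python
def linear_growth(start, step, periods):
    """Add the same fixed amount every period."""
    return [start + step * n for n in range(periods + 1)]


def exponential_growth(start, factor, periods):
    """Multiply by the same fixed factor every period."""
    return [start * factor ** n for n in range(periods + 1)]


if __name__ == "__main__":
    # Hypothetical values: both series start at 1 and run for 30 periods.
    linear = linear_growth(start=1, step=1, periods=30)
    doubling = exponential_growth(start=1, factor=2, periods=30)
    for n in (0, 5, 10, 20, 30):
        print(f"period {n:2d}: linear={linear[n]:>3}  doubling={doubling[n]:>13,}")
```

For the first few periods the two series look similar, which is part of why the later stages of a doubling process tend to surprise people.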

Interacting with exponential change in technology are exponential changes in population, human health and climate that are the direct result of centuries of advancing technology used by social systems to solve the problems at hand. Our problems in turn impact how the brain functions and evolves (Mithenal & Parsons, 2008). Three trends are highly global in their impact and provide an important parallel to digital technology development: population size; economic and health conditions; and percentage of carbon dioxide.

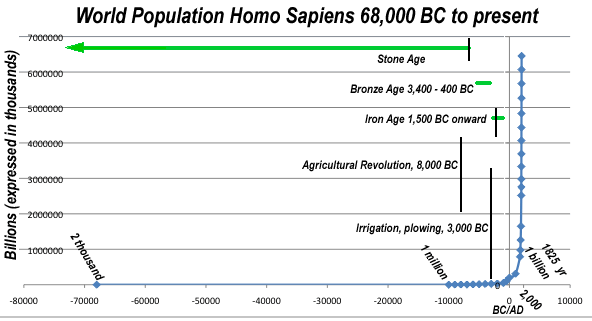

The most obvious and well known social trend is the population curve. The world population graph on the right shows the famous J curve of the population explosion data (Kremer, 1993^; World Population, 2008^).

However, the growing number of people in the last 200 years of this graph obscures the equally significant 500-fold growth in human population from 70,000 BC to 10,000 BC, from perhaps 2,000 to 1 million people, hence the labels about those details on the bottom of the graph. The population numbers before the year 1930 have to be given considerable latitude as they are based on research and informed conjecture, not a detailed census. For example, the "two thousand" population figure near 70,000 BC that raises the close call of the extinction of our species is based on recent genetic research (Behar, 2008^; Garrigan & Hammer, 2006^; Schmid, 2008^). The 1 million figure for the year 10,000 BC is the lower estimate of a range between 1 and 10 million drawn from multiple studies (U.S. Census Bureau, 2008^; World population estimates, 2008^). The graph also obscures the demographic transition (van der Pluijm, 2006^; Galor & Weil, 2000^) happening in the industrialized countries where population growth has leveled or is in slight decline. Given the 6,000-fold increase in population from 10,000 B.C. to the present, such a demographic transition holds some hope for all countries to control population growth as their economic and health conditions improve in the decades ahead.
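The fold increases quoted above can be restated as a number of doublings, which makes the two periods easier to compare. The sketch below uses only the population figures given in the text; the "present" population of 6 billion is inferred from the 6,000-fold figure, and the span of roughly 12,000 years from 10,000 BC to the present is an assumption. The average doubling time also assumes steady growth, which is a simplification.

```python
import math


def fold_increase(start, end):
    """How many times larger the end value is than the start value."""
    return end / start


def doublings(start, end):
    """Number of successive doublings needed to get from start to end."""
    return math.log2(end / start)


def average_doubling_time(start, end, years):
    """Years per doubling, assuming (unrealistically) steady growth."""
    return years / doublings(start, end)


if __name__ == "__main__":
    # 70,000 BC to 10,000 BC: perhaps 2,000 people to 1 million.
    early = (2_000, 1_000_000, 60_000)
    # 10,000 BC to the present: 1 million to about 6 billion (assumed span).
    recent = (1_000_000, 6_000_000_000, 12_000)
    for label, (start, end, years) in (("early", early), ("recent", recent)):
        print(label,
              f"fold={fold_increase(start, end):,.0f}",
              f"doublings={doublings(start, end):.2f}",
              f"avg doubling time={average_doubling_time(start, end, years):,.0f} years")
```

Under these assumptions the early period averages one doubling roughly every 6,700 years, while the recent period averages one roughly every 950 years.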

An even more obscured element buried within the population curve is the acceleration of human biological and genetic evolution, made recently visible by explosive growth in knowledge of molecular biology and DNA studies. Hawks et al. (2007^) point to genetic data suggesting acceleration over the last 40,000 years, while Cochran & Harpending (2009^) cite further data noting additional acceleration in the last 10,000 years due to many factors including intense population growth. Dunbar and Schultz (2007^) report that "...the balance of evidence now clearly favors the suggestion that it was the computational demands of living in large, complex societies that selected for large brains". Portin's review of the literature (2007^, 2008^) on molecular genetics and the evolution of man found further support for the view of continuing human evolution over the last tens of thousands of years, concluding "that genetic and cultural evolution have gone hand in hand during the recent, and still continuing, evolution of the mankind interacting with each others in a bidirectional fashion" (Portin, 2008^).

It is also important to understand how small the population gatherings were that drove brain growth. Dunbar and Schultz highlight the importance of "living in large complex societies" (2007^), but in early human history these societies were tiny in comparison with today. During the Upper Paleolithic period (from 40,000 to 10,000 B.C.) the largest European society putting pressure on the brain's capacity for language, art and tool development was the Chippewyan ethnic group (Bocquet-Appel et al., 2005^). This group totaled around 2,800 people in gatherings of a few dozen to a few hundred scattered across 40 square miles (100 square kilometers), so the computational pressure on the brain in prior millennia would have been significantly smaller.

An inference that can now be drawn is that interactions among groups of 24-30 children and adults, the size of many school classrooms, were intense enough to have contributed to the growth of human intellectual capacity. As troops of chattering monkeys are much larger than this, mere social interaction must be insufficient to drive intellectual development. The hominids' unique capacity to use their hands for tool development must also have contributed to the impact of population size as a critical factor in human intellectual evolution. The array and impact of the growing complexity of digital tools in the 21st century will be addressed shortly.

The current pressure for evolutionary growth in human intellectual capacity in the 21st century is driven by hundreds of cities with millions of people and by instant, anywhere communication with potentially billions of people. There is no measurable way to make this comparison of mental computation demands other than to recognize that they are many times higher today. Clearly, synchronizing the growing demands and needs of over 7 billion people will require ever greater computational capacity of both people and supporting digital communication systems.

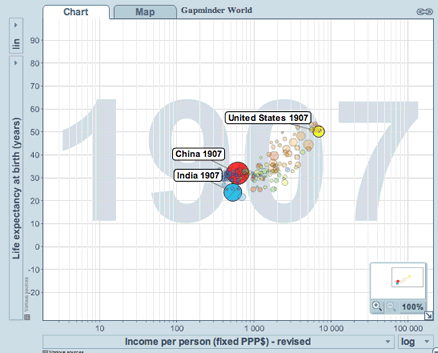

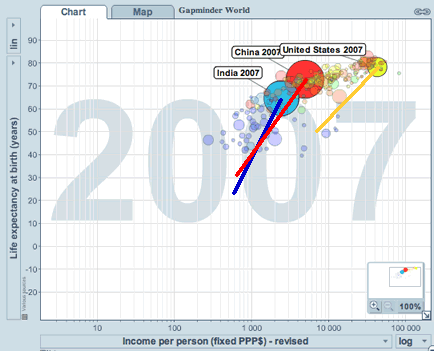

The rapid growth and evolution of population has been accompanied by generally steady improvement in economic and health conditions, with accelerated improvement in the last 100 years. In these two graphs generated at gapminder.org, each bubble is a country and the size of the bubble represents that country's relative population. The first graph shows results from the year 1907 and the second from 2007. (At this Gapminder page it is easy to select countries for comparison on the right of the chart, then slowly slide the "year controller" at the bottom to see change over time in health and wealth.)

In these two graphs on the left, India, China and the United States have been highlighted from the some 200 possible country bubbles. The slant of their straight lines quickly shows the degree of improvement over the one hundred years since their 1907 data points, though in fact their growth was anything but straight. Life expectancy at birth in years, shown on the vertical axis, is a measurement of a country's physical health. The horizontal axis uses Income Per Person as a measure of a country's economic health. Note that as the population of these three countries has grown significantly over the last 100 years, the bubbles of most of the countries of the world have also moved towards better economic and human health, which in turn requires more schools and educators to advance even further. Among many factors influencing these trends, the education of women has done the most to improve a country's economic and health conditions, and the Internet is now the cheapest way to deliver knowledge to the greatest number. Can such improvement be sustained and continue?

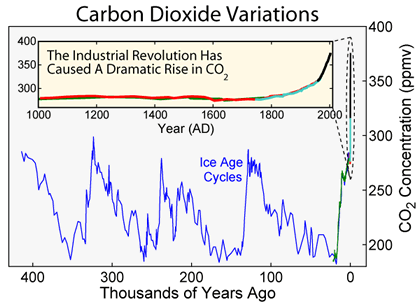

The graph on the left from Wikipedia on carbon dioxide in the Earth's atmosphere shows the CO2 cycle over the last 400,000 years and its climb beyond its historic range in the last 100 years as health and economic conditions improved around the globe. A second graph from the Union of Concerned Scientists (not shown here) displays similar CO2 emissions shooting beyond historical norms but includes data going back 650,000 years. No country has achieved its economic and health improvements without harmful pollution that in the long term can put an end to or reverse such positive increases in human welfare. One example is the excessive CO2 emissions that, as a by-product, damage the climate through global warming. One possible conclusion is that different and quickly implemented changes are needed in the power technologies that undergird the energy systems that drive world culture. A wide range of carbon calculators and more are available for calculating an individual's climate impact.

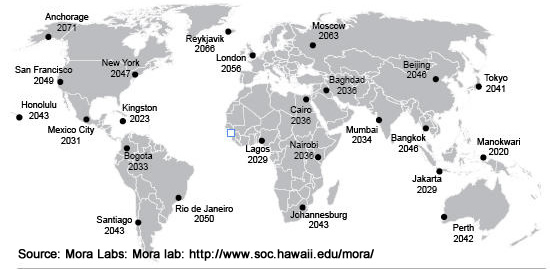

This exponential change in turn has real consequences for where each of us lives. The map of sites and dates on the right from Mora Labs shows the approximate date at which the coldest year going forward will be warmer than the hottest year ever recorded at that site over the last 150 years of reliable scientific measurement (Mora et al., 2013^). In a New York Times interview Dr. Mora said: “Go back in your life to think about the hottest, most traumatic event you have experienced. What we’re saying is that very soon, that event is going to become the norm” (Gillis, 2013^). How can you search for the hottest day ever recorded in your town? Based on the dates of this map, when do you estimate Mora's prediction would happen at your location?

The climate challenge is further complicated in that the digital knowledge systems needed to continue to address all three of these issues are one of the major consumers of electrical power, both in the power to run the computer systems and then to cool them.

These three background trends together with the digital technology trends about to be discussed all contribute to and multiply the increasing pace of change in 21st century culture. What are the trends then in the digital tools that are being used to leverage these background trends in population, health and wealth and climate to solve a wide range of current problems?

"We are living in exponential times." (Fisch, McLeod, & Bronman, 2005)

The entire era of digital tools would represent the tiniest of dots on the extreme right hand edge of the background trend graphs above. Instead of millions of years, thousands of generations or centuries of time, these digital trends have taken shape in less than a single human lifetime, with significant developments occurring in the last ten years and even the last year. Digital tools have only been available for the last 60 or so years of human history, emerging during World War II in the 1940s. The commercial global Internet system with its World Wide Web applications began in 1993-1994, ushering in the era of the digital palette and its transformation of the nature of literacy. Consequently these digital trends are very recent, with a long term impact that is just beginning to be understood. What is clear is that many of the increasingly greater changes to date have been changes in communication systems. That in turn has set in motion radical changes in global energy systems as well as in the creation and transportation of goods, changes that are only at the beginning of their exponential curves. These latter developments are not discussed further but are capably addressed by Rifkin in his book, The Zero Marginal Cost Society (2014^).

Can humankind build on a series of digital trends to creatively address the inherent problems in the aforementioned backdrop trends as well as other problem areas? Such capacity may be far more critical to thriving in the decades ahead than we can imagine.

The Trend of Doubling Computer Chip Capacity (N * 2)

The modern computer has been a rapidly evolving sandwich of miniaturized electronic circuits etched into computer chips cut from silicon wafers, which include all of the major parts of a computer: the CPU (central processing unit chip); memory (RAM/ROM chips); and data storage (increasingly Flash chips instead of spinning hard drives). The prediction about the nature of the rapid evolution of this digital sandwich is called Moore's Law. It has broadly held true since the 1960s. Moore, co-founder of the chip manufacturer Intel, observed that the number of transistors that can be placed on an integrated circuit chip was doubling every two years. In fact, the interval has varied from 18 months to 2.5 years. As good as chip design improvements have become, the increase in the capacity of other digital devices has come even faster. Figures indicate that the mass storage capacity of hard drives is doubling almost every 12 months. In short, computer design has doubled the performance power of a computer at any given size about every 2 years, for about the same cost, for 50 years. There is also evidence that Moore's Law itself could undergo its own rapid exponential acceleration.
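To get a feel for what 50 years of doubling implies, the following minimal sketch computes the cumulative growth factor for the three doubling intervals mentioned above (18 months, 2 years and 2.5 years). The function name is hypothetical and the calculation assumes perfectly regular doubling, which real chip generations only approximate.

```python
def growth_factor(years, doubling_period_years):
    """Cumulative multiplier after `years` of doubling once per period."""
    return 2 ** (years / doubling_period_years)


if __name__ == "__main__":
    for period in (1.5, 2, 2.5):
        factor = growth_factor(50, period)
        print(f"doubling every {period} years for 50 years: about {factor:,.0f}x")
```

With a two-year interval, 50 years holds 25 doublings, or a roughly 33 million-fold increase. Shifting the interval by only six months in either direction changes the result by orders of magnitude, which is why the exact doubling period matters so much for long-range forecasts.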

First, let's come to grips with what Moore's Law (webopedia, wikipedia) has been for a half-century, how this impacts a computer's function and what this might mean. This ever doubling capacity has also cut the cost of computer components in half about every two years, which has an impact on a wide range of computer technologies. Further, as chip components shrink, the distance electrons have to travel has decreased, making the chip faster as well. Kryder's Law predicts the same trends in hard drive storage space (Walter, 2006^). Hendy's Law shows the same trend with pixels per dollar in digital cameras (see graph on the right), something anyone buying digital cameras has observed in the last few years. Our economy is increasingly based on this prediction remaining true. That is, a central pillar of the world economy doubles in value for roughly the same cost about every 2 years. No single company or business in history has ever achieved that level of astonishing improvement and profitability, yet the market as a whole has managed to do precisely that. This doubling is a kind of economic heartbeat around which the world's economy is increasingly built.

Whatever the size or speed of the computer, all of its control and management is now done through "chips" built from transistors of various materials, not all of them silicon. The chips that are the CPU (central processing unit) and the memory chips are made of switches, assembled from very tiny transistors. Millions and billions of these transistors can be placed on fingernail size chips. The state of each transistor switch represents a bit, which can be thought of as a switch that is on or off. All computer directions and commands depend on the condition of a collection of these switches. Mathematically the switch is either a 1 or a 0. The mathematical concepts of base two or binary language and Boolean logic are used to control these switches through what is called low level language or machine language. The central processing unit of a computer makes its decisions by processing sets of bits called bytes at the rate of millions of decisions per second and then billions and higher. One common measure of this speed is flops, which stands for floating point operations per second. By combining thousands of CPUs using parallel processing, the fastest computers, supercomputers, are also getting faster, with the 2009 winner of the world's fastest title clocking 1.75 petaflops (quadrillions of operations per second) (Ogg, 2009^) and the 2010 winner, a Chinese machine called the Tianhe-1, clocking 2.67 petaflops (Perlow, 2010^).
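The idea of bits as on/off switches combined through Boolean logic can be sketched in a few lines. The helper names below are hypothetical and the half adder is just one textbook example of Boolean logic at work; it is not a description of how any particular CPU is wired.

```python
def to_bits(value, width=8):
    """Show a non-negative integer as a row of on/off switches (1s and 0s)."""
    return format(value, f"0{width}b")


def from_bits(bits):
    """Read a row of 1s and 0s back as a base-two number."""
    return int(bits, 2)


def half_adder(a, b):
    """Add two one-bit values using Boolean logic.

    XOR gives the sum bit and AND gives the carry bit.
    """
    return a ^ b, a & b


if __name__ == "__main__":
    print(to_bits(65))             # eight switches, one byte
    print(from_bits("01000001"))   # the same byte read as a number
    print(2 ** 8)                  # distinct patterns one byte can hold
    print(half_adder(1, 1))        # 1 + 1 in binary: sum 0, carry 1
```

Everything a processor does, including the floating point operations counted in flops, is ultimately built up from very large numbers of simple switch operations like these.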

In higher level programming languages such as Logo, Pascal, Fortran or C++, one programming term or command sets the status of a large number of these bits (switches). Rows and rows of these programming commands are used to create large collections of computer code referred to as programs or applications. If the programming code that was needed for your average word processor of today was printed out for display, it would consist of many millions of lines of code. These chips work with mechanical devices to handle the input and output of bits that the computer uses to direct its work. These mechanical devices include sensors, robotic devices, printers and disk drives.

As the size of the switch is decreased, computer designers can pack more computer directions into the same space, making the chip more powerful while using less electricity. Intel announced on January 25, 2006 that it had produced a RAM (memory) chip with 45 nanometer logic design, reporting that the "45nm SRAM chip has more than 1 billion transistors" (TechTree, 2006^). The 45 nanometers refers to the smallest space between wires on the chip, a number that continues to fall. The limit of physics for wires etched on chips appears to be approaching at around 11 nanometer technology (Perlow, 2010^). Because of Moore's Law, computers have gone from requiring the space of a small building, to the size of a room, to fitting on a desk, to a lap and then to handheld devices sometimes called PDAs (Personal Digital Assistants) or smartphones, netbooks and touch tablets. Researchers and their skeptics have kept a running debate going on the feasibility of nanotechnology for years (McGee, 2000^). Based on the research into the design of nanocomputers, the trend towards downsizing the computer is far from over. Nanotubes, made of carbon atoms, can be arranged in three dimensions, be 100 times as strong as steel, and be used for computer functioning. As an example of future designs, just one of the red blood cells in your body can be thought of as a tiny machine. The evolution of nanocomputer technology will enable us to build even better red blood cells or other microscopic machines that swim within the cell. The prefix "nano-" in this context refers to a billionth of a meter, a length 3-4 atoms wide. When nanotechnology is fully developed, machines will be assembled and snapped together using the chemistry of atoms and molecules. Their assembly factory will be a test tube. To program a computer may come to mean the same thing as making a kind of computer, erasing the distinction between hardware and software.

The overall trend is towards the ever smaller from which we can predict even more tiny personal computing devices in the years ahead. Given the limited workspace of students in classrooms and their high degree of mobility, the ongoing development in handheld computers and smaller devices needs to be closely monitored by educators. A variety of computer technologies are already wearable (Wikipedia, wearable computers, 2008^). Chips, sensors, wiring and processors can now be woven into clothing. The time will come in the next ten years when the "form factor" or size and shape of the computer will be so small that where we wear the computer will become a major design issue. Will we want our voice activated computer to dangle from our ears, slide behind a belt buckle, or perhaps be implanted underneath our skin like heart pacemakers or reside in the surface of our skin like a tattoo? Such tiny computers would have to come with warnings about keeping them out of the reach of small children who might swallow them or how to do so safely. In the future the doctor may prescribe that we swallow thousands of nanocomputers floating invisibly in a spoonful of liquid in order to complete diagnostic lab work or to clean out a clogged artery.

Many have noted that current chip technology is approaching the limits of physics (Kanellos, 2003^). There is a finite point at which one cannot further shrink the size of transistors on a chip and still have them function. Unless nanotechnology or some other technology actually becomes operational, this trend will end, according to some predictions, around 2022 (Kaku, 2012^). If true, this could have a wrenching negative effect on the world economy. Others argue that attempting to keep pace with Moore's Law will bring its own problems (Malone, 2003^). Kurzweil (2001^) researched the longer view of information storage. He found that this information doubling can be traced to the late 1800s, and that over this time four different technologies took turns at leading the effort of information storage and then became obsolete, with chip technology now being the fifth. He concluded that this trend of new technologies picking up the baton when the latest technology leader fades will continue for decades. The theoretical physicist Kaku, though, notes that no replacement technology for chips is yet ready to step in.

With IBM's announcement in 2010 of a computer system that merges photon computing with electronic computing (Perlow, 2010^), one of Kurzweil's predictions appeared to have come true. A sixth technology had emerged for encoding computer data: optical computing. Having come to understand Moore's Law in terms of a 50 year pattern of doubling the capacity of computer chips about every two years, this new technology may change this fundamental definition. This sixth phase of computer operation development could allow an even faster pace to Moore's Law. Praise for this development was significant. "This time, however, instead of just proving itself consistently correct, Moore's Law is going to have to be completely re-written — instead of microprocessor technology doubling its performance every two years, we'll be looking forward to ten to twenty fold increases in computational power, at a bare minimum, every five years. . . . IBM installs their Blue Waters supercomputer, which will be installed at the National Center for Supercomputing Applications (NCSA) in Urbana, Illinois in the Summer of 2011 which will be four times faster than the current world's fastest, the Tianhe-1" (Perlow, 2010^). IBM later withdrew their photonic computer from NCSA, citing concerns about unexpected costs and complexity. Work with nanophotonic technology continues with the promise of a 10 to 20 times improvement in computer communication ("Integrated Photonics", 2011^) and should eventually lead to replacing silicon within the CPU and computer memory as well.
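The figures in Perlow's quote can be put on the same footing as Moore's Law by converting them into an implied doubling time. The sketch below is only an arithmetic restatement of the quoted numbers (doubling every two years versus a ten to twenty fold increase every five years); the function names are hypothetical, and smooth exponential growth is assumed.

```python
import math


def fold_over(years, doubling_period_years):
    """Fold increase after `years` when capacity doubles once per period."""
    return 2 ** (years / doubling_period_years)


def implied_doubling_time(fold_increase, years):
    """Doubling period that would produce `fold_increase` over `years`."""
    return years * math.log(2) / math.log(fold_increase)


if __name__ == "__main__":
    print(f"doubling every 2 years gives {fold_over(5, 2):.1f}x in 5 years")
    for fold in (10, 20):
        months = implied_doubling_time(fold, 5) * 12
        print(f"{fold}x in 5 years implies doubling about every {months:.0f} months")
```

On these assumptions the classic two-year doubling yields a bit under a six fold gain in five years, so the quoted ten to twenty fold range corresponds to a doubling time of roughly 14 to 18 months.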

Beyond optical computing, even later technologies are being researched and developed, including biocomputers and quantum computers (Hillis, 1999^). The underlying technology for biocomputers is nanotechnology, building things atom by atom at the DNA level (Merkle, 2008^), also called 3-D molecular computing by Ray Kurzweil (2003^) or DNA computing. A DNA device designed to play tic-tac-toe and called MAYA has not been beaten (Hogan, 2003^). Research on carbon nanotubes, nanowires and nanoparticles may take another 5-10 years to perfect the precise placement needed for large scale manufacturing of DNA based computer processors (Ganapati, 2009^; Harvey, 2009^; Kershner, 2009^). Another option, quantum computers, would work with the forces that control the atom and the elements of the atom to solve currently unsolvable problems (Norton, 2007^). Such effort would toss aside classical physics for quantum mechanics, replacing the bit as the fundamental unit of memory (an on-off switch or 1/0 state) with a quantum bit or qubit. The qubit can serve as a 1 or 0 or both simultaneously (West, 2000^). Such future technical developments imply the not too far off creation of a world that is perhaps beyond our current understanding and at the very edge of our imagination today.
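The phrase "a 1 or 0 or both simultaneously" can be made slightly more concrete with a toy calculation. The sketch below is a classical simulation of the arithmetic for a single qubit, under the standard textbook model in which a qubit is described by two amplitudes; it is an illustration only and says nothing about how real quantum hardware is built. All function names are hypothetical.

```python
import math


def hadamard(state):
    """Apply the Hadamard gate to a single-qubit state (amp_0, amp_1)."""
    a, b = state
    s = 1 / math.sqrt(2)
    return (s * (a + b), s * (a - b))


def probabilities(state):
    """Chance of reading 0 or 1 when the qubit is measured."""
    a, b = state
    return (abs(a) ** 2, abs(b) ** 2)


def amplitudes_needed(n_qubits):
    """How many amplitudes a classical simulation must track."""
    return 2 ** n_qubits


if __name__ == "__main__":
    zero = (1.0, 0.0)             # a qubit that is definitely 0
    both = hadamard(zero)         # an equal mix of 0 and 1
    print(probabilities(zero))
    print(probabilities(both))
    print(amplitudes_needed(50))  # grows exponentially with qubit count
```

The last function hints at why quantum computing belongs in a discussion of exponential trends: each added qubit doubles the number of amplitudes a conventional computer would need to track in order to simulate the system.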

What once was a fairly steady line trending upward will be shooting almost straight up as more and more of the electronics of computers are replaced by photonics working at the speed of light. A rocket ride of change is ahead in computer capacity as this new type of chip technology works its way downward from supercomputers to the personal computer level.

The Trend of Doubling Integration into Other Tools, Machines, and Cultural Systems (N * 2)

The great, great, great, great, great, great, great grandfather computer chips of today's leading computer chips still outsell in quantity the cutting edge computer chips that are sold by the billion. That is, the 8080 microchip which was first built in 1973 and other older computer chips, sometimes called microcontrollers, outsold current microprocessor chips by a factor of four, selling over five billion in 2002. What could this high and increasing demand for "ancient" chips mean?

For a number of reasons, as these older microchip designs fell from their original and expensive cutting edge roles, they became easy and cheap to manufacture, just fifty cents or less for an 8088 microchip today. Today's cars, refrigerators, elevators, security systems and most everything electrical now include them in subcomponents by the dozens. As the price for microchips plummeted, their management and control functions were so useful that the chips were increasingly considered for integration into almost everything. "What's more, we haven't even spun out all of the potential applications of the 8080, Z80, or 6502, much less the 80386, Dec Alpha, or the first PowerPC" (Malone, 2003^). In short, the integration of computer technology into almost every aspect of our agricultural, industrial and cyberspace tools is doubling in tempo with the beat of Moore's Law. This in turn means that our cultural systems rapidly incorporate computer technology into our thinking, philosophy and psychology, just as happened with the introduction of writing into human culture.

The Trend of Fast and Increasing Pace (N^2) in Cultural Change

Toffler observed (1970^) that cultural change in the information age was occurring faster than in earlier eras. More importantly, he noted an important impact of such change. Sufficiently rapid change produces cultural and personal shock, a summation that I will refer to as Toffler's Law. On the one hand, change leads to many opportunities for improvement. On the other hand, for those insufficiently prepared, encounters with a high rate of change can lead to a kind of mental shock, a kind of paralysis in one's ability to think and respond to the change.

A simple observation of such improvement is the computer's gradually increasing capacity to handle multiple media. As net speed increases, a wider range of media is increasingly distributed over ever larger regions of the Internet. In turn, this means that composers can increasingly work with an ever bigger palette of options including text, still images, audio, animation and video. Yet among an audience with the greatest capacity to benefit from such difference, the print based nature of university "textbooks" has changed little over the last several hundred years. Toffler's Law argues that the shock of change causes the intended impact of a technology to appear much later than generally expected. For example, for years after corporations had widely adopted hourly computer use by their employees, statisticians failed to find any positive economic impact of those computers. Today information technology is a central factor in economic growth.

Further cultural change is also driven by the exponential increase in the quantity and quality of information and its immediate availability through mobile information technologies from smartphones to other sizes of portable computers. The CEO of Google estimated that between the birth of the world and 2003, some five exabytes of information were created. The world now creates five exabytes every two days (Evans, 2010^).

More importantly, the rate of change is accelerating. "At today's rate of change, we will achieve an amount of progress equivalent to that of the whole 20th century in 14 years, then as the acceleration continues, in 7 years. The progress in the 21st century will be about 1,000 times greater than that in the 20th century, which was no slouch in terms of change" (Kurzweil, 2003^). It takes time to adapt. School innovators incorporating information technology will require some patience as computer skills develop. But developing an educational system that thrives on such change and eliminates the problem of "change shock" becomes an increasingly important priority. Impossible? Should you question whether thriving on change is possible, spend some time with a three year old.

The Trend of Doubling Network and Communication System Capacity (N * 2)

Intimate partner to today's computer is the network. Said another way, the network grid is the newest computer. Home and business offices have moved from telephone based dial-up modems to many times faster broadband speeds that are delivered by a variety of telecommunications companies including cable TV, telephone and satellite companies. These telecommunication companies draw their bandwidth from even faster trunk lines. Networks began offering commercial rates for 10 gigabit (Gb) Ethernet connections using optical fiber lines in May of 2001. The good news is that there will be plenty of capacity for future growth. The Bandwidth Scaling Law shows that network bandwidth is increasing at close to the same rate of doubling as the capacity of computer chips. Butters' Law of Photonics states that the quantity of data at the same price coming from an optical fiber is doubling every nine months. Best estimates into the foreseeable future are that this network trend will continue.
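
To make the doubling claim concrete, the following minimal Python sketch converts a doubling period into a growth factor. The nine-month period is the fiber figure quoted above; the 18-month period used for chips is only an assumed illustration, and the function name `growth_factor` is hypothetical.

```python
def growth_factor(years, doubling_period_months):
    """Return how many times a quantity multiplies over `years`
    if it doubles once every `doubling_period_months`."""
    doublings = years * 12 / doubling_period_months
    return 2 ** doublings


# Nine months is the fiber doubling period quoted in the text.
# Eighteen months is an ASSUMED chip doubling period, used only for comparison.
for years in (3, 6, 9):
    fiber = growth_factor(years, 9)
    chips = growth_factor(years, 18)
    print(f"{years} years: fiber x{fiber:,.0f}, chips x{chips:,.0f}")
```

Under these assumptions, three years of nine-month doublings multiplies fiber capacity per dollar by 16, and nine years multiplies it by 4,096. Small differences in the doubling period compound into very large differences in outcome.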

Networks with speeds of 100 gigabits per second and higher are being planned using fiber optics for the Internet2 system. Wireless cell phone carriers, including AT&T and Verizon, are completing their 3G networks which, though many times slower, can transmit computer data at about 3.6 megabits per second, a vast improvement. However, a Swedish company announced the first wireless 4G cell phone system in December of 2009, offering speeds up to 80 megabits per second, about 22 times faster than 3G (Bradley, 2009^).

Computers and their networks will continue to become much faster, cheaper and more powerful. The original approach to computer speed was to produce a single chip, the CPU, that could process data faster than the last design. The world's very fastest computers were referred to as supercomputers. A "high-water" mark of one billion floating-point operations per second was first used to help define the meaning of super-computer. That is of course a very old and outdated standard for supercomputers today. Personal computers that are faster than those speeds are sold in the consumer marketplace at standard PC prices.

With the advent of fast computer networking, another approach to faster processing of a task or problem has emerged, the team approach. A computer can assign different parts of the task to a team of different CPUs in the same computer or to different computers across a network to integrate the results at a particular computer. This is known as parallel and grid computing and using a very large number of computers for this work is referred to as massively parallel computing. Several projects are now underway which work on problems that use the idle time on thousands of computers scattered across the Internet.
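
The team approach can be sketched in a few lines of Python: split the task, hand each part to a worker, then integrate the partial results in one place. This is a minimal illustration, not a grid computing system. The task (a sum of squares), the function names and the worker count are all assumptions chosen for the example, and threads are used only to keep the sketch simple; CPU-bound work in CPython would normally use separate processes or separate machines.

```python
from concurrent.futures import ThreadPoolExecutor


def split_into_chunks(items, n_chunks):
    """Divide a list into at most n_chunks pieces of nearly equal size."""
    if not items:
        return []
    size = -(-len(items) // n_chunks)  # ceiling division
    return [items[i:i + size] for i in range(0, len(items), size)]


def sum_of_squares(chunk):
    """The part of the task handled by one member of the team."""
    return sum(x * x for x in chunk)


def parallel_sum_of_squares(items, n_workers=4):
    """Assign chunks to workers, then integrate the partial results."""
    chunks = split_into_chunks(items, n_workers)
    with ThreadPoolExecutor(max_workers=n_workers) as pool:
        partials = list(pool.map(sum_of_squares, chunks))
    return sum(partials)


print(parallel_sum_of_squares(list(range(1000))))
```

The same split, assign and integrate pattern applies whether the workers are cores in one machine or idle computers scattered across the Internet; only the cost of moving the chunks and results changes.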

Future revolutions in size and speed are likely to come from biocomputing, optical computing and quantum mechanics. Our computers are currently totally dependent on electrons to move bits of data around within the computer. Electrical storage of data may give way to the use of photons instead. That is, instead of electrical transistors, photo transistors would be placed in our "chips" as part of RAM and ROM. Instead of electrical wires, industry will increasingly use fiber wires for internal connections just as telephone companies are rapidly replacing their telephone trunk lines made of copper with glass fiber that transmits light waves instead of electrons. This will have a dramatic impact on the speed, capacity and size of the computers and their networks. For example, Motorola announced in September 2001 that it had solved a 30 year optical silicon chip puzzle on how to grow light-emitting semiconductors on a silicon wafer (chip material). This discovery integrates electron designs and photon designs through an intermediary chip layer that bonds well with both silicon and light emitters. Such work promises a ten-fold speed increase in computers when these designs reach the marketplace in the years ahead. In terms of speed, current conjecture is that computers will be hundreds of times faster than today's computers.

Perhaps as optical computer networks and systems advance, we will think of electron based information storage and transmission the way we think of the steam-age of motor cars, an interesting era that happened long ago. Educators in the public schools, which are generally hard pressed to have any or at least adequate computing and network resources, must strain a bit to imagine the world that is soon coming, a world where the vast majority of its citizens of any age have all the computing and communication power they can use, as often as it is needed.

The Trends of Increasing Rates of Social Interaction (N^2) (2^N)

Reed's Law (Wikipedia, Reed's Law; Reed, 2001^) and Metcalfe's Law (Metcalfe, 2000^, 2007^; Wikipedia, Metcalfe's Law) predict the increasing value of computer networks as the number of network participants and networked devices and the number of network groups increase. Metcalfe's Law says the "total value of a communications network grows with the square of the number of devices or people it connects (N^2)." Reed's Law observes that the number and value of group-forming options grow exponentially as N, the number of people in a network, increases (2^N). Further, Reed's Law would indicate that the value of group forming options grows even faster than the growing value of the network itself. (See spreadsheet comparison.) Moore's Law and the Bandwidth Scaling Law say that the difficulty of acquiring computer technology and getting adequate computer network bandwidth will continue to drop. That is, building both local and global problem solving networks will continue to become easier.
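
The difference between the two laws is easiest to see side by side. The short Python sketch below tabulates the square of N against 2 to the power N for a few network sizes. The sample sizes and function names are illustrative assumptions, and the results are unscaled counts rather than measures of real economic value.

```python
def metcalfe_value(n):
    """Metcalfe's Law: network value grows with the square of n."""
    return n ** 2


def reed_value(n):
    """Reed's Law: group-forming options grow as 2 to the power n.
    (The count of subgroups with two or more members is 2**n - n - 1,
    which behaves the same way for large n.)"""
    return 2 ** n


print(f"{'N':>4} {'N^2':>8} {'2^N':>16}")
for n in (2, 5, 10, 20, 30):
    print(f"{n:>4} {metcalfe_value(n):>8} {reed_value(n):>16,}")
```

With only 30 participants, the square of N is 900 while 2 to the power N already exceeds one billion, which is why group-forming value outruns the value of the network itself.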

It is intriguing that at the same time these trends were beginning to shoot upward, educational researchers were advancing the concepts and methods of cooperative learning. Led by David and Roger Johnson's research of the 1980's long before the concepts of the Internet and the World Wide Web were commonplace, a new perspective was emerging on organizing learners for educational effectiveness. The concepts of cooperative group behavior (Johnson et al, 1985a^, 1985b^; Johnson, 1994^; Johnson, D. W. & Johnson, F. P., 2003^) merge well with these previously discussed long term trends. Educators that re-examine the cooperative ideas that grew from Johnson and Johnson's study and look for ways to apply those group and collaboration skills using the emerging communication tools of an increasingly networked world will develop new models that will not only revitalize education but lead community and economic development as well. Such Web based developments have been studied and reported on extensively by Shirky (2009^, 2010^).

The good news for educators is that real skills with using the Internet and the formation of groups using computer networks will contribute to significantly improving two of the most important factors in learning, the rate of interaction and the depth of interaction. Educational interaction includes many factors, including students, curriculum, parents, teachers, administrators and more. The bad news for educators is that K-12 students seldom are taught network interaction applications let alone use computer networking tools in school (e.g., email, Instant Messenger chat, newsgroups, peer-to-peer networking and so forth). Instead this activity goes on in homes of those connected to the Internet without educational guidance or improvement. If anything, this knowledge, which is critical to current economic growth, is too often seen as frivolous or dangerous for school productivity. At the same time, the need for this interaction knowledge is increasing exponentially.

Taken together, the network lever of the Bandwidth Scaling Law along with Metcalfe's Law and Reed's Law encourage and improve socialization and collaboration, both of great value to educators. Vygotsky's work and the constructivist movement in education have long recognized the social nature of learning. Further, the global nature of the Internet has allowed teams to form across vast distances of geography and time. Collaboration is also increasingly recognized as a critical aspect of creativity and innovation (Paulus & Nijstad, 2003^; Sawyer, 2007^). The fruits of such change are readily visible. Friedman's (2005^) hypothesis that the "world is flat" comes from his research on the resulting radical change in the nature of economic competition and collaboration on a global scale from 1989 to the present. The creative nature of digital collaboration is one more lever that accelerates change.

The Trend of Increasing Information in Storage, Communication and Processing (N * 2)

The management of information (ideas) is one more of the many 21st century exponential trends. As a concept, information has a number of interesting characteristics that are transforming the 21st century. The explosion of information has also created a series of "information induced gaps that create serious challenges for society, educators and students in classrooms" (Houghton, 2011).

In a series of books, the Tofflers (1980, 1990, 2006) and Castells (2000, 2010) established the idea that knowledge (information) had become more powerful than wealth, which in a preceding era had grown more powerful than the physical power that drives agriculture and police and military systems. Information management consists of three major divisions: storing (reaching across time, 23% growth per year); communicating (reaching across space, 28% growth per year); and computing (processing, 58% growth per year) (Hilbert & Lopez, 2011). However, unlike prior sources of power (money and force), information has the unique economic property of being "non-rival". That is, taking and using my dinner plate prevents me from using it, but taking my idea and using it does not keep me or an infinite number of others from using it as well. Said more eloquently by Thomas Jefferson, "...he who lights his taper at mine, receives light without darkening me" (Jefferson, 1813).
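
Annual growth percentages are easier to compare when restated as doubling times. The sketch below does this for the three rates reported above, assuming each rate compounds steadily year after year; that constancy is a simplifying assumption, and the function name is hypothetical.

```python
import math


def doubling_time_years(annual_growth):
    """Years needed to double at a constant compound annual growth rate."""
    return math.log(2) / math.log(1 + annual_growth)


# Annual growth rates as reported in the text (Hilbert & Lopez, 2011).
rates = {"storing": 0.23, "communicating": 0.28, "computing": 0.58}
for name, rate in rates.items():
    print(f"{name:<14} {rate:.0%} per year -> doubles in "
          f"{doubling_time_years(rate):.1f} years")
```

On these figures, stored information doubles about every 3.3 years, communication capacity about every 2.8 years, and computing capacity about every 1.5 years.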

In one step along the information age transformation, from 1950 to 1980 manufacturing goods was eclipsed by information management as the dominant economic activity in the world. The tipping point in information storage occurred in 2002 when more information was stored digitally than in analog format. In 2000, 75% of the world's information was still in analog format (paper, videotape, etc.) but by 2007, 94% was preserved digitally (Hilbert & Lopez, 2011). In spite of the 23% annual growth in data storage, scientists have "recently passed the point where more data is being collected than we can physically store. This storage gap will widen rapidly in data-intensive fields" (Staff, 2011). This storage gap is well established in broadcasting. In 2007, the 1.9 zettabytes being broadcast per year was about 6.5 times the 295 exabytes being stored (Hilbert & Lopez, 2011). An exabyte is a billion gigabytes or a trillion megabytes.

The Hilbert & Lopez study (2011) covering the period from 1986 to 2007 was the most significant analysis of the world's capacity for information management to that date. Their article in Science is just one of a large set of articles in a special edition of the weekly journal, Dealing with Data. Reading text about numbers that are so beyond the scale of everyday human comprehension might require some different perspectives provided by using other media.

Hilbert discussed these findings in a PBS radio broadcast interview and in a USC TV interview that was presented on the national cable TV network MSNBC.

Why is pondering the increasing deluge of data important? As a matter of educational policy, and considering just the data that is being stored not broadcast, there is a significant shortage of analytical minds that can keep up with and sift this explosion of data to comprehend it (Staff, 2011), let alone to find ways to explain it to others and apply it usefully to human and planetary improvement. Consequently, we need far more students with the talent to use higher order thinking skills to use the information to good import.

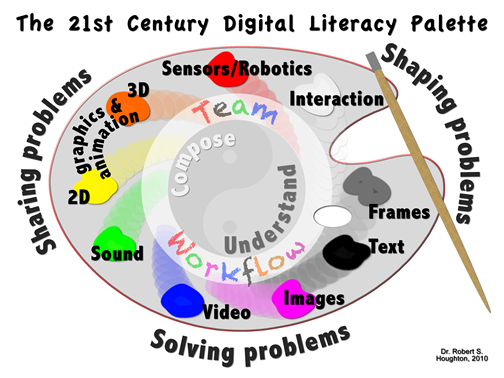

Further, we will need far more students capable of expressing ideas in the wide variety of media being used on the Web to support that explaining and higher order idea processing. To compose anything in any media requires processing information. The information explosion created the need for the Internet and World Wide Web which in turn radically diversified the means of expression or composing. The digital palette on the right represents the range of options currently in widespread use on the Web used to deal with or help comprehend the data explosion.

Of special interest to education are the developments with the traditionally valued systems of publishing (books, newspapers and journals), television and radio. The use of these one-way forms of communication was a relatively flat 6% annual growth rate in comparison with the 28% annual growth of bidirectional telecommunication (e.g., telephone, smartphone, computers and the Internet) (Hilbert & Lopez, 2011). This indicates a clear need for students to spend far more time improving their skills with two-way systems of communication and storage than with paper technology.

What is needed next is a study of the percentage of time school students spend with and in the study of managing information in paper systems and time spent with digital systems. Because of the lack of computers in classrooms, the simple conjecture is that the vast majority of a student's American school classroom time is spent in paper based systems, which would indicate a massive misalignment with global, and in particular, United States of America economic reality. Public school funding is so constricted for digital technology that the primary tools currently available to schools are for a world that began rapidly disappearing for adults in the growing part of the economy over 25 years ago. If schools put an accent on questioning and higher order thinking skills, however, students will have foundational skills with real relevance for the digital world.

The exponential, upward-curving nature of the data trends indicates that there is a new world arriving at the pace and force of a tsunami. The Web economy that has emerged feeds on this data explosion, and those who wish to have jobs to feed themselves and their families would be wise to examine what kind of learning this requires to keep their resumes current and what kind of K-12 curriculum this requires to prepare their children for the future.

The Law of Accelerating Returns [V = Ca * (Cb ^ (Cc * t)) ^ (Cd * t)]

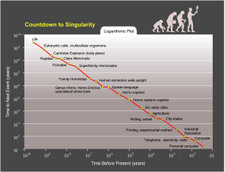

The Law of Accelerating Returns [V = Ca * (Cb ^ (Cc * t)) ^ (Cd * t)]Inferences use historical data to predict. One of the most novel hypotheses based on computing technology trends was done by the mathematician Vernor Vinge. His inference thinking (1993) led to his prediction of a "technological singularity", a technological change so rapid and profound that it represented a transformation that breaks with known historical patterns, something comparable to a caterpillar which transforms into a butterfly yet occurs to human life itself. This thinking about a forthcoming transformation of human biology and intelligence in the next few decades was further researched and extended by Ray Kurzweil, forming the Law of Accelerating Returns.  The above formula that was derived from this research "is a double exponential--an exponential curve in which the rate of exponential growth is growing at a different exponential rate" (Kurzweil, 2001). Further, the data from both thinkers would suggest that this event will occur in the lifetime of many who are now reading this sentence. Click the thumbnail picture on the right for the full-sized chart of some of the data points in just one of his charts.
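
The formula in the heading can be evaluated directly to see how it pulls away from an ordinary exponential. The Python sketch below is only an illustration: the constants Ca, Cb, Cc and Cd are arbitrary values assumed for the example, not Kurzweil's fitted values, and the function names are hypothetical.

```python
def accelerating_returns(t, ca=1.0, cb=2.0, cc=0.5, cd=0.1):
    """V = Ca * (Cb ^ (Cc * t)) ^ (Cd * t), as written in the heading.
    Algebraically this equals Ca * Cb ** (Cc * Cd * t * t), so the
    exponent itself grows as time passes."""
    return ca * (cb ** (cc * t)) ** (cd * t)


def simple_exponential(t, ca=1.0, cb=2.0, cc=0.5):
    """V = Ca * Cb ^ (Cc * t), an ordinary exponential for comparison."""
    return ca * cb ** (cc * t)


# ASSUMED constants; t is in arbitrary time units.
for t in (0, 10, 20, 30):
    print(f"t={t:>2}  simple={simple_exponential(t):>12,.0f}  "
          f"accelerating={accelerating_returns(t):>20,.0f}")
```

With these assumed constants the two curves agree at t = 10, but by t = 20 the ordinary exponential has reached 1,024 while the accelerating curve has passed one million.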

Inferences are just predictions, but the better ones are hunches based on known data. The concept of a technological singularity transforming humanity has become the basis for much discussion and debate over the degree of possibility and the value of such an event. Those interested in further evaluating Kurzweil's numerous data points, trend charts and heavily referenced thinking should read his 58 page essay, the Law of Accelerating Returns. Information technology is just one of the emerging technologies that are used to support the idea of transhumanism. The World Transhumanist Association defines it in part in this way: "The study of the ramifications, promises, and potential dangers of technologies that will enable us to overcome fundamental human limitations, and the related study of the ethical matters involved in developing and using such technologies" (WTA faq, 2006).

Computer technology has been the underlying technology that has enabled and/or accelerated a set of other emerging technologies. This collection of emerging technologies is referenced by a series of acronyms that one may encounter in current readings. These include: GRIN (Genetic, Robotic, Information, and Nanotechnology); GRAIN (Genetics, Robotics, Artificial Intelligence, Nanotechnology); BANG (Bits, Atoms, Neurons, Genes); GNR (Genetics, Nanotechnology and Robotics); and NBIC (nanotechnology, biotechnology, information technology and cognitive science).

Are there fundamental concepts that would undermine the "always progressing" technological determinism implied by the thinking of Vinge and Kurzweil and many supporters of transhumanism? This thinking now turns to nonlinearity. Will there always be surprises no matter how powerful computing capacity becomes?

The Trend of Unpredictability [ RX(1-X) ]

Exponential curves do not always go up. Unpredictability emerges in different ways in different aspects of our culture. This includes use of computers, in debugging them and in depending on their inference producing potential for accurate predictions. There is deep irony here.

The conceptual framework for the design of computers began with the need for a more reliable machine for mathematical calculation, for example to produce more accurate tables for ship navigation and so better predict location. One of the motivations behind the first mechanical computer was the need to eliminate human error in mathematical calculations. One motivation for early electronic computer design was long range weather prediction. Along the way, the former problem of error elimination encountered the problem of complexity. The latter problem of weather prediction created problems with predictability, which in turn stimulated a transformation of understanding. All are fundamental problems. Each helps us understand the limits of computing and mathematical power.

These fundamental unsolved problems have quite a history. Long before the invention of computers, errors in basic addition and subtraction found their way into tables used in ship navigation and finance which caused further errors in larger social systems. There was also great need to speed the calculation process. Computers have handily addressed these initial problems of several centuries' vintage. However, as computer programs grew in size and complexity and became connected to computer networks, it became impossible to totally debug them.

The deepest of ironies, however, was related to fundamental mathematical and scientific understanding. Computers were essential to discovering nonlinear mathematics. This branch of mathematics finally unraveled the hope of producing reliable long term prediction in a multitude of subjects that mattered most, not just for computing but for science and mathematics as well (Houghton, 1989). The problem of predicting the weather days, months or years in advance is a classic example of the issue, a problem that persists in spite of extensive knowledge of weather science and computing power. The problem is not isolated to weather but occurs in general with important problems in physics, chemistry, biology and sociology.

Software Design: Bugs and Complexity

Faster and cheaper computers do not necessarily mean smarter and better performing computers. Computers are dependent on software. As valuable as computers have become, our inability to thoroughly debug them has become a major source of computing unpredictability (Cooper, 1995; Cooper, 1999; Festa, 2001; Kaner & Pels, 1998; Levinson, 2001; Mann, 2002). Wirth's Law that "software decelerates faster than hardware accelerates" points to the problem of scale as programs become larger and thereby more complex. Beyond accidents of commission or omission, another element of this unpredictability is the danger of intentional effort to do harm. There is an element seeking to crack security codes and to change computer applications to force them to do something other than what the user of the application is led to believe they will do, to cause the software to do damage to a person, machine or an economy. This creates a kind of constant evolution in an application's code to defend against the latest innovation of attack.

Computers can be just as unpredictable during insignificant moments as during our most significant need for them. Failure can occur in more subtle ways than a hardware crash. Sometimes the best designs are error prone and remain so until better technology is available. For example, the first "bug" in a computer was literally a moth trapped between the points of Relay #70, in panel F, a problem that no longer exists now that the circuitry is embedded in a chip.

Sometimes failure is one of insufficient design thinking. Computer software too often includes features that are hard to use and confusing, which causes human mistakes. Further, the computer software code in too many programs has become so large and complex that the average computer application program and its interaction with the operating system cannot be thoroughly debugged and never will be. Also, the complexity of the code running computer networks and their openness to so many users creates additional vulnerabilities from viruses and other intentionally misbehaving code which can bring down or hobble a computer and its network. Designers must work from these observations and build systems that expect imperfection and do a better job of handling it.

Newer operating systems and applications should be getting better at this over time. However, designers have some distance to go in making computers match the reliability of other common but simpler devices such as a toaster or a TV. The release of the Windows XP operating system is a telling example of current practice. "Microsoft released Windows XP on Oct. 25, 2001. That same day, in what may be a record, the company posted 18 megabytes of patches on its Web site: bug fixes, compatibility updates and enhancements. Two patches fixed important security holes. Or rather, one of them did; the other patch didn't work" (p. 34, Mann, 2002). Such problems are common across the software industry. In a multiyear study of 13,000 programs by Humphrey of Carnegie Mellon, the research showed that "on average, professional coders make 100 to 150 errors in every thousand lines of code they write" (Mann, 2002). Finally, there is a certain economic incentive in releasing software programs before they are fully tested. This allows the software company to appear to stay ahead of or up with competitors and lets consumers debug products through their complaints, while the same consumers pay maintenance fees and "incident support" for resolving problems with what they bought. Many software engineers say that software quality is not improving. Instead, they say, it is getting worse.
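
The quoted error rate can be turned into a rough sense of scale. The sketch below applies the 100 to 150 errors per thousand lines to a program of a given size and then asks how many would remain if a given fraction were found before release. The program size and the 99% removal figure are purely hypothetical inputs, and the function names are illustrative.

```python
def injected_defects(lines_of_code, per_kloc_low=100, per_kloc_high=150):
    """Range of coding errors made while writing `lines_of_code`,
    using the 100 to 150 per thousand lines quoted from Mann (2002)."""
    kloc = lines_of_code / 1000
    return kloc * per_kloc_low, kloc * per_kloc_high


def remaining_defects(defects, removal_efficiency):
    """Defects left after review and testing remove a given fraction."""
    return defects * (1 - removal_efficiency)


# HYPOTHETICAL inputs: a one-million-line program, 99% removal before release.
low, high = injected_defects(1_000_000)
print(f"errors made while coding: {low:,.0f} to {high:,.0f}")
print(f"still present at release: {remaining_defects(low, 0.99):,.0f} "
      f"to {remaining_defects(high, 0.99):,.0f}")
```

Even under the generous assumption that 99% of errors are caught, a program of this size would ship with on the order of a thousand or more defects, which is consistent with the day-one patches described above.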

Software failures due to familiar, readily prevented coding errors have cost companies and governments tens of billions of dollars a year (Levinson, 2001). When computers become part of other machines, including X-ray equipment, weapons, passenger jets and cars, software failures in these machines have also led to deaths. Some programmers have concluded that until software firms start facing and losing significant product liability lawsuits, software will not improve. So far, software firms have used their software licenses as a shield against lawsuits. How long will this continue?

The latest evolution of this problem is occurring in cars. Cars were first made more reliable, less costly and more maintainable by replacing the cable of wires that ran to every electrical device with a computer network which enabled a single wire to connect and manage all the items. In a later step, manufacturers began replacing mechanical controls for brakes, gas pedals and steering wheels with a computer that manages the required controlling motor, a development sometimes called fly-by-wire or drive-by-wire. Investigation into car accidents caused by the gas pedal randomly accelerating on its own is leading consultants to point to software as a culprit, either as a result of programming error or of being susceptible to outside electronic interference. Apple co-founder Steve Wozniak reported that his Toyota Prius was accelerating in a particular cruise control setting without his foot anywhere near the gas pedal (Wozniak, 2010). "Antony Anderson, a U.K.-based electrical engineering consultant who has testified as an expert witness for plaintiffs in lawsuits, said any federal rule for brake override systems should ensure that the units aren’t run by the computer controlling the electronic throttle system. A case of sudden acceleration may be caused by electronic interference, so brakes guided by the same computer might not work, Anderson said. “If the electronics have malfunctioned, the software is in disarray,” he said. “It won’t accept an additional command.”" (Green & Fisk, 2010). Though every computer user has learned to deal with their desktop computer's software freezing up, it is quite another thing to address buggy code doing 70 miles an hour. Computer systems used in dangerous situations must include fail safe interrupts that provide protection until the computer can be fixed or restarted.

Even if programmers did not make basic mistakes in their code, there is still the problem of programs becoming so large and complex that no single person can examine and understand all of the possible problems of interaction that may occur between different interacting elements of the program and points of interaction with input from outside the program. In short, our computers and the software applications that run on them do not and cannot live up to the ideal of the flawless computer operations running flawless logic. Since both can be easily flawed, computers fail or crash far too frequently and at times in which that failure can have a major impact. Software designers can not test every possible interaction of their code with reality. At some point after they have hopefully given it their best effort, it is released for sale. This means that anyone using a computer has become part of a giant experiment to discover and report bugs in computer applications so that the next version of the program can remove them or better address them.

May's Law

Software failure, however, in spite of its importance, pales in significance in comparison with the more fundamental forces of unpredictability. The goal of long term predictability had been kept alive since the mathematical work of Isaac Newton in the 1600's. Newton's belief was in turn spurred on by Laplace (1749-1827), a brilliant French mathematician, and Von Neumann, an early designer of the architecture of the modern computer in the 1950's. In contrast, May's Law (or May's equation RX(1-X)) is perhaps the simplest example of the many nonlinear models that put severe limits on such scientific pursuit, even though the perception of long term predictability lingers in social, educational and political circles. The graph on the left is but one example of the many types of trends such a simple equation can produce. It should also be noted from this basic equation that nonlinearity's uniqueness does not come from complexity but from the nature of its simplest interactions. At a certain threshold of growth, tiny changes can build on each other to create radical and unpredictable change. May's simple mathematical expression is but one example which demonstrates that key chemical, biological, physical and social systems important to science and our culture diverge unpredictably and exponentially with time. This phenomenon plays out repeatedly on the stock market in ways that continue to defy understanding (Mandelbrot & Hudson, 2004) in spite of copious amounts of data and deep knowledge of past patterns. Better and faster computers will not and cannot change this.
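
May's equation is simple enough to try directly. The Python sketch below iterates it in its usual form, where each new X is R times X times (1 - X), and compares a low growth rate with a high one. The particular values of R, the starting point and the size of the tiny perturbation are assumptions chosen for illustration.

```python
def logistic_map(r, x0, steps):
    """Iterate X -> R * X * (1 - X) and return the whole trajectory."""
    xs = [x0]
    for _ in range(steps):
        x = xs[-1]
        xs.append(r * x * (1 - x))
    return xs


# Low growth rate: the trajectory settles on a single steady value.
calm = logistic_map(2.8, 0.2, 200)
print(f"R=2.8 settles near {calm[-1]:.4f}")

# High growth rate: two starts that differ by one part in a billion.
a = logistic_map(4.0, 0.2, 60)
b = logistic_map(4.0, 0.2 + 1e-9, 60)
for step in (0, 10, 20, 30, 40, 50):
    print(f"step {step:>2}: gap = {abs(a[step] - b[step]):.2e}")
```

At R = 2.8 the values settle near 0.6429 regardless of small changes in the start. At R = 4.0 the gap between the two nearly identical starts grows until it is as large as the values themselves within a few dozen steps, which is the sense in which tiny changes defeat long term prediction even when the rule is known exactly.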

Without computer technology, researchers had been unable to see that the impact of nonlinearity is everywhere. Neither had they been able to describe its nature, a turbulence that defies long term predictability yet produces phenomena which can be predictable over the short term. Weather is a classic example with which all are familiar. Predicting weeks ahead that a picnic afternoon will be free of rain cannot be done, but once a thunderstorm emerges, its track and duration can be predicted with some short term reliability. Such self-forming phenomena emerging from nonlinear and unpredictable backgrounds are called solitons in physics and are elsewhere referred to as singularities. Nonlinear science would indicate that the emergence of Kurzweil's transforming singularity in human culture is not impossible, but much less likely than the Law of Accelerating Returns might imply. The unpredictability of nonlinear systems, the dominant form of system behavior, makes it impossible to predict just what the future will hold. Bugs in computer systems compound the problem, but even computing perfection cannot solve it.

Nonlinear systems and their unpredictability have long been known to play an important role in every major field of study. Such knowledge is still only partially assimilated into our psychology and philosophy and largely absent from educational theory, curriculum and many educational products promising a certain level of guaranteed results.

The unpredictability of the world is an important reason why creativity, and instruction in being more creative, are needed: creativity in developing new products and new ideas for dealing with a new problem or challenge, or in finding better ways to deal with known issues. The several previously discussed exponential trends contribute heavily to the resources used in creating new products and solutions. So many new products and solutions are appearing that excitement and exaggerated statements about the cure, product, curriculum, lesson plan or solution can often lead to beliefs about their capabilities that are not true or not yet true. Exaggerating claims in advertisement and marketing is an accepted part of our culture. There is a cycle of idea testing that all of us experience in the fast pace of 21st century culture. The cycle has several stages:

The problem of sifting innovation claims became so great that research businesses have formed to sell advice on evaluating those product claims. One of those consulting companies is Gartner, Inc., which specializes in information technology research. Gartner invented the Hype Cycle graph to track and communicate its view of the progress of innovative technology ideas and their ability to survive the Hype Cycle path and reach some level of real and predictable value over time ("Hype", 2012).

Attached to the graph are the names of various information technology concepts that often represent a group of similar but competing products from many companies ("Hype", 2012). Some ideas may be more familiar than others and the shown product concepts are representative, not a comprehensive listing of all information technology ideas, which would not fit on the graph. More current graphs on different topics can be found by searching for "hype cycle" and year and sometimes by a topic area such as education or government. The hype cycle concept easily applies to far more than information technology. Where such graphs are not available for a given topic, it is an interesting task for a team of thinkers to create their own hype cycle for their field of interest.

We've all heard those questions or statements. "Isn't X cool? Isn't this the greatest product ever? This will solve your problem." Learning all the technology concepts on the chart is not its point here and is perhaps not the chart's greatest value. The divisions of the graph give us a way to recognize that effective change and improvements take time and testing. It is also a reminder to think critically as we explore the usefulness of some idea as the next "new idea" keeps arriving. There is a more important question to ask. How good is the proof of its value? When the idea has proved its usefulness, when it has sufficiently stood the test of time, then it is time to think about the changes needed for its broader adoption and use. When change is forced on users without the proof, it's time to create new experimental data about the effectiveness of the product or idea.

In 1939, shortly before the start of World War II, Harold Benjamin wrote a witty, satirical commentary, a now classic piece of educational literature titled The Saber-Tooth Curriculum. As its publication date is nearing 75 years, it will soon fall into the public domain, at which point all interested in education should download it to their digital reader for free. The tongue-in-cheek work was a criticism of the resistance to change of educational systems and the legislatures that manage and fund them. There are seemingly endless shorter adaptations of this 182 page work that can be found by googling its title, Saber-Tooth Curriculum. One of the shorter versions is this 4 minute Vimeo video. Watch it.

The Saber-Tooth Curriculum from Jaydi on Vimeo.

As in the era of the Saber-Tooth Curriculum, whether welcomed or not, change happens. Though many good ideas have weathered the test of time, the problems and trends discussed above indicate the accelerating and transformational nature of 21st century change. The pace of change is now such that cultures need educational systems that lead change rather than chase it, and that help a world culture learn to deal with its increasingly innovation dependent character. Unfortunately, schools that are largely optimized to produce masses of students for a more modern version of the factory culture of the late 1800s face an employment and economic scene that needs creativity, entrepreneurship and problem solving skills. The struggle for such schools to emerge is painful to watch. Have the colleges of education that educate the nation's teachers become participants in perpetuating the curriculum of today's school systems without showing how to escape it? How does an institution prepare teachers to prepare students for a future reality without locking them into the current history of educational practice?

Educators are key members of the change agent community which leads change. Solutions to problem situations that lack change, or solutions to changing situations, are innovations. Innovation is simply change that is new to us. The ERIC database of educational literature can quickly lead to thousands of previously published articles on innovation, change agents and diffusion of innovation theory. The specialized vocabulary that has built up around the nature of change can lead to ideas that have proven useful in encouraging and dealing with the results of the changes being discussed. But the change problem is not just about appropriate teaching and administrative methodology. As Toffler noted with his publication of Future Shock (1970^), change also has many psychological implications with which educators must contend. These thoughts address the more specific innovations of the 21st century and the issues they raise for change and innovation theory.

The issue of change is also affected by the degree of change and our history of experience with change. Our basic experience with the seasons and changes in the weather leads to an expectation that today's weather will be a little colder or a little warmer than the previous day or month depending on the time of year, a kind of change called incremental change. This pattern of incremental change is common, applies to many things around us, and sets expectations about the degree of surprise that change can bring. Yet we are not unfamiliar with exponential change, also called exponential growth: sudden change that surprises us with its degree. Examples include tornadoes, floods and hurricanes in the weather and revolutions in social systems.

Our children and citizens need to experience many examples of the power of additive change and the power of exponential change. Here's one very personal way to better understand the difference; experiment with your bank account. Instead of adding one penny to the bank account each day, try doubling the number of pennies each day and see how long that can be sustained; see the attached Excel spreadsheet to experiment with the results that show the difference between additive and exponential growth. A sustained change that at first seems insignificant can rather quickly become a surprising danger or opportunity.
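For readers without the spreadsheet at hand, the same penny experiment can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions: the function names are hypothetical, the first deposit is one penny, and "doubling" is taken to mean that each day's deposit is twice the previous day's.

```python
def additive_total(days, daily_pennies=1):
    """Total pennies after depositing the same amount every day."""
    return daily_pennies * days


def doubling_total(days, first_pennies=1):
    """Total pennies when each day's deposit is double the previous day's.

    The deposits are 1, 2, 4, ... so the running total is 2**days - 1
    times the first deposit.
    """
    return first_pennies * (2 ** days - 1)


def as_dollars(pennies):
    """Format a penny count as dollars and cents."""
    return f"${pennies // 100:,}.{pennies % 100:02d}"


if __name__ == "__main__":
    for day in (1, 7, 14, 21, 30):
        print(
            f"day {day:2d}: additive {as_dollars(additive_total(day)):>8}"
            f"   doubling {as_dollars(doubling_total(day)):>16}"
        )
```

After 30 days the additive plan has put away 30 pennies, while the doubling plan would require a total of 1,073,741,823 pennies, more than ten million dollars, which is why the doubling cannot be sustained for long.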

Educators must find a way out of the box in which they are placed. Whether responding to accelerating trends and unpredictability or leading learning activities, educators are deeply invested in helping people to change and to deal with change, but there are no simple rules on how to do this. There is a much deeper discussion of the philosophy of change (Mortensen, 2006) which recognizes that the nature of change is still not completely understood and that what is known is not widely practiced. As teachers exist to create and facilitate change in people's lives, examining the nature of change requires thinking beyond the digital trends. How intriguing that our species continues to make computer tools for thinking and keeping up with change, tools that are imperfect yet functional, and of such importance to new knowledge and understanding that great effort is still spent in using and improving them. Yet software and hardware updates to such technology do not arrive every hour. Updates are spread out over many months. In general, there is much about one day or one year that is just like the last. This poses two major problems for the change challenge and raises a small pile of questions. How much change is really happening?

Further, how difficult or easy is it to handle even simple changes? What is essential to enabling change?

How great and how uniform is the change challenge? Does one of these pie chart graphs best represent the current cultural situation across the range of human cultures and settings? If not, an Excel file with a graph of these change percentages is available to experiment with different views of the nature of change; just change the numerical data and the graph automatically adjusts. Measuring the change challenge from one individual or one country to another must involve significant variation. How much change do you expect in your world by tomorrow? In four years? In eight? How much change will occur by high school graduation for today's kindergarten student? How well does and should our educational system prepare us for what Piaget (1969) referred to as accommodation, in this case the increasing change in understanding needed for the 21st century trends of both rapid change and wrenching unpredictable adjustments? Coming to terms with some measure of how much change needs to be addressed is an important step in planning curriculum for the future.

The challenge is to use our thinking tools to understand and to teach how to thrive on increasing change, diversity and unpredictability. It can be done. After all, every living organism has mastered this to some degree. Yet adaptation to change and the need for change have proven difficult for human beings. Changing human behavior to confront even known and directly personal problems is difficult. One assumption is that failure to change is due to a lack of information: that behavior changes once a good analysis of the problem has occurred and those involved have accurate factual information that they understand. Though having information helps, in fact, even when people are adequately informed and the analysis is clear, only 1 in 10 people dealing with severe life-threatening illnesses, from smoking related cancers to weight driven diabetes, will end the habitual yet highly avoidable behavior that caused the problem in the first place (Deutschman, 2005). In spite of the significant evidence of the short and long term destructiveness to themselves, friends, acquaintances and their community, dangerous habits continue: smokers keep puffing, over-eaters keep indulging, and heavy drinkers keep drinking. Threat, even severe threat, even the real threat of loss of life, is often insufficient to cause a change in habits for many.

Deutschman noted three elements that have proven effective in building the capacity to change (2005).