"Any sufficiently advanced technology is indistinguishable from magic." Arthur Clarke, (1962). Profiles of the Future.

As we seek to understand the elements of a computer and how they function, they can take on a magical character. Equally magical is what they can do in the world with the right commands. The Harry Potter series of books and movies by J. K. Rowling offers an educationally delicious metaphor for creating change: the magic wand. Utter the right formula while waving the wand with the right touch, and change happens. Reality, of course, is somewhat different, though our computer designs are approaching the size, if not quite yet the shape, of a magic wand. Understanding how our current digital "wand", the computer, is created and shaped helps dispel myths about its nature. In getting past the sense of magic about computers, we better understand the real-world role we must play to use this digital tool to create effective and supportive changes in the world around us. In fact, there is not "a" digital wand but multiple types of computers and connected devices, as the pictures above show. They have all become "personal computers" in that a high percentage of citizens now own or share the capacity of each of these types. They range from giant room-size machines (supercomputers) to desktop and laptop computers to voice-recognition-driven pocket computers (e.g., iPhone 4S) to waveables (e.g., Wii), but each shares the same three underlying categories of features. By learning a device's specialized "spells" (commands and procedures) and how to apply them at the right time with the touch of the right computer system, we can make real change happen too.

This creation of tools to serve our commands goes back millions of years. Humankind has constantly innovated in developing and using tools to increase its leverage for solving problems, whether increasing the mechanical leverage of our muscles or the intellectual leverage of our brains. From the creative use of the simplest machines such as stone age blades to levers and pulleys to solar-system-spanning satellites, better ideas, new materials and new technologies constantly emerge for new problem processing purposes. Computing devices are no different. As with each of the other generations of thinking developments, this digital fourth generation of thinking tools will drive transformations of the educational system. Digital system designs are a symbiotic part of a larger human system, and they must evolve in tandem with human evolution or there is no market for their advances. In other words, computing advances ultimately depend on advances in our educational systems, in our ability to use our intellectual tools to real advantage. Having a basic understanding of how computers function is essential not only for advancing education, but for enabling educators to advance how computers can help civilization progress. A better computer operator can not only use current tools more wisely but can direct the making of better tools as well. The right commands with the right type of "wand" will have a powerful impact.

In this digital computer era, computer hardware design continues to shift through the use of different technologies to hold and process the most basic element of computer information, the bit, a one or zero. A bit can be thought of as a light that is either on, a one, or off, a zero, or it could be thought of as a gate that is either closed, a one, or open, a zero. A row of bits, or many rows of bits, make patterns of ones and zeros that hold and process everything the computer can display. Can you think of simple patterns of ones and zeros that might hold information? For example, 00000100 might stand for the letter A and 00000101 might stand for the letter B, or the color red of a pixel on the computer screen, or a particular pitch of sound. By the year 2007, such digital patterns of bits held over 94% of all the world's information, and that share was still growing.
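The idea that a row of bits can stand for a letter or a number can be tried out in a few lines of Python. This is only a sketch: it uses ASCII, one real and widely used convention in which the letter A is the number 65, rather than the made-up patterns in the paragraph above. The helper names `to_bits` and `from_bits` are invented for this illustration.

```python
# Illustrative sketch: ASCII is one real convention for mapping 8-bit
# patterns to letters. The helper names below are hypothetical.

def to_bits(value: int, width: int = 8) -> str:
    """Return the pattern of ones and zeros for a non-negative integer."""
    return format(value, f"0{width}b")

def from_bits(pattern: str) -> int:
    """Read a string of ones and zeros back as an integer."""
    return int(pattern, 2)

letter_a = to_bits(ord("A"))   # ASCII code 65
letter_b = to_bits(ord("B"))   # ASCII code 66
print(letter_a, letter_b)      # 01000001 01000010

# The same eight bits mean different things depending on how they are read.
pattern = "01000001"
print(chr(from_bits(pattern)))  # read as a letter: A
print(from_bits(pattern))       # read as a number, e.g. a brightness level: 65
```

The point of the last two lines is that the bits themselves carry no meaning; the meaning comes from the convention the software applies when it reads them.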

Currently computers hold and process these patterns using transistors, switches representing ones and zeros. A transistor is a kind of electron gate or electronic switch. The first transistor (the picture on the right, from a video by engineerguy) was a chunk of germanium (a semiconductor) and a special pattern of electrical parts, invented in 1947 to replace the unreliable vacuum tubes then in use for holding the value of a bit. The first integrated circuit was invented in 1958 and contained just a few transistors and logic gates. As the pictures at the top of this page suggest, the technology required to hold and pass the state of a one or zero (a bit) in an active computer has shrunk through ever finer integrated circuits (ICs). Today, tens of billions of transistors can fit on a piece of silicon the size of a fingernail to make up computer memory and its control logic and decision making switches. Further, trillions of magnetic particles on a hard drive can be set to the same patterns of ones and zeros.

As the technology making those switches or bits has shrunk, the speed, capacity and adaptability of the computer have increased. To hold those bits of information, computer designers used vacuum tubes in the 1940s, then later electronic switches (transistors) etched into silicon chips that hold electrons; the bits were stored for the long term in magnetic particles on spinning hard drives. Next generation research has already produced photon gates and single-atom-sized gates. But no matter the size of the device, computers all share a basic underlying structure of three major design elements which these thoughts will explore in greater detail: the CPU (processing), RAM/ROM (active memory) and I/O (input and output devices).

When the on-switch of a computer is pressed, the initial loading of the computer's operating system comes in two parts. First, the computer's ROM chips, though small in comparison with the operating system, contain a set of instructions that tell the machine's parts to wake up. Second, those instructions find and load into computer memory the upgradeable operating system, which then drives the functioning of the CPU, RAM/ROM and I/O. This system can then support an additional layer of specialized applications that do such things as provide video editing or playing and enable Web page searching and reading, as well as millions of other functions.

All of these parts must run as a highly integrated team. As the computer is operated, one might imagine the insides of the computer as a series of conveyor belts carrying information between the CPU (central processing unit), high speed RAM and lower speed long term storage. Each of these conveyor belts runs at a different speed because the components that the belts connect have different capacities. For example, memory chips move information around thousands of times faster than the hard drive can move data. Computer network connections generally run even slower than the hard drives, but not always. Speed up the CPU or other parts and all the conveyor belts will need an upgrade as well to take advantage of the speed. Consequently, you cannot stick a new CPU in an old computer and have it run faster without changing many other things. Put another way, there is no advantage to having your heart beat faster than the body's circulation system can move the blood around. The bits of a computer are the blood in its system. This is why it is generally cheaper and more effective to buy a new computer every few years than to keep upgrading an old one.
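The conveyor belt argument can be put into a small Python sketch. It makes a simplifying assumption that a piece of work has to pass through every stage, so the slowest stage sets the pace for the whole chain. The transfer rates below are made-up round numbers chosen for illustration, not measurements of any real machine, and `slowest_stage` is a hypothetical helper name.

```python
# Hypothetical transfer rates in megabytes per second. These are
# invented round numbers for illustration, not real measurements.

def slowest_stage(stage_rates: dict) -> tuple:
    """Return the name and rate of the slowest stage, which caps the chain."""
    name = min(stage_rates, key=stage_rates.get)
    return name, stage_rates[name]

old_machine = {"cpu": 2_000.0, "ram": 1_500.0, "hard_drive": 100.0}

# Swap in a CPU four times faster and leave everything else alone.
upgraded_cpu = dict(old_machine, cpu=8_000.0)

print(slowest_stage(old_machine))
print(slowest_stage(upgraded_cpu))
```

Under this simplified model both calls report the hard drive as the limit, which is the article's point: the faster CPU changes nothing until the slower belts are upgraded too.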

Educators' careers are already tightly integrated with the processing (understanding and composing) and management of information. In 1986 less than 1% of the world's information was digital; by 2007 over 94% was digital, with stored digital information expanding at the rate of 23% a year (Hilbert & Lopez, 2011^). The knowledge explosion and its digital nature are very real (Houghton, 2011^). Though public schools' digital resources have lagged far behind the general culture, it is both natural and critically important that educators follow the trajectory of current and future computer developments closely. Further, if educators communicate their needs and visions well, their ideas will also help lead the development of new computer designs and information processing tools. Since news items that fit into each of these three categories come along continuously, educators might challenge their students to make a bulletin board for each of these three areas (processing, memory and input/output devices such as longer term storage) with pictures and news of technologies that they discover through the year. There are two key races underway: the cheapest, smallest, lightest device that can be made; and the fastest computer in the world, irrespective of how much space it takes up (think football fields in size). As these developments are followed, teachers should ask students to look for trends in what they are reporting.

Components: The CPU

Image courtesy of LBNL

Speed thrills. Portability sells. These ideas represent two major branches of development in the design of computers and their CPUs.

Computer speed is driven by the CPU, the heart of the computer. The Central Processing Unit is the center of a personal computer's operations. The collection of all the transistors needed to carry out CPU functions on a single chip is referred to as a microprocessor (How Things Work, 2010) or processor. The CPU processes collections of bits. A bit can be thought of as a single light bulb, on or off, a one or zero. Eight bits make a byte, and the number of bits that the CPU can receive from RAM/ROM at one time and consider in a single operation is called its word size. Like working with some kind of visual Morse code, visualize looking at a row of light bulbs, some on and some off, and making a decision about what to do next from that pattern. Remember that famous poem about the midnight ride of Paul Revere? He would carry a message of where the British invasion would come by watching lights in a bell tower, "one if by land and two if by sea". Today's Paul Revere-like decision maker is buried deep in the heart of your computer, making decisions billions of times per second while considering a very long row of settings with each decision. Welcome to the magic!

The most current models of personal computer CPUs work with 64 bits at a time, but 8-bit to 32-bit microprocessors can be found everywhere, from elevators to bread makers to car engines. The CPU is sufficiently generalized to handle all computing tasks, but that generality means some jobs are better handed to specialized processing units such as GPUs (graphics processing units), which support devices that include video graphics. Both Intel and AMD announced hybrid CPU-GPUs for 2011 that lower power requirements and improve image handling, which will have special value for handheld mobile devices.

The speed of a computer can be measured in different ways. One measure of speed is the CPU's clock rate, or how many times per second the CPU can give out instructions about what to do with a set of bits. Processing one set of bits takes one clock cycle, and one cycle per second is one hertz. For a long time this was measured in millions of cycles per second (megahertz rate), and currently in billions (gigahertz rate). From 1974 to 2000, most computers were measured by megahertz speeds. A CPU that runs at one megahertz would complete one million cycles per second. By the end of the year 2002, 2 gigahertz (2 billion cycles per second) systems were common. Though single CPUs pushed out to 3 gigahertz speeds, they generated too much heat, which led to a redesign with multiple CPUs or multiple cores carrying out tasks in parallel, currently ranging from dual-core to quad-core designs. Note the transition in vocabulary and meaning as the CPU (also called a microprocessor) becomes a "core", one of a set of CPUs bundled together. For example, a leading manufacturer of microprocessors, Intel, announced its Core i7 quad-core products on November 17, 2008 (Wikipedia, 2010), whose 4 integrated CPUs each run in the 2 to 3 gigahertz range (Wikipedia, 2010). Perhaps some day there will be a CPU for every application running in the computer.
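The arithmetic behind megahertz and gigahertz is easy to check. The sketch below uses the clock rates mentioned above; the function names are invented for this example, and the multi-core figure is only an upper bound that assumes every core is busy on every cycle, which real software rarely achieves.

```python
def cycle_time_ns(clock_hz: float) -> float:
    """Length of one clock cycle in nanoseconds (billionths of a second)."""
    return 1e9 / clock_hz

def peak_cycles_per_second(cores: int, clock_hz: float) -> float:
    """Upper bound on total cycles per second if every core stays busy."""
    return cores * clock_hz

for label, hz in [("1 megahertz", 1e6), ("2 gigahertz", 2e9), ("3 gigahertz", 3e9)]:
    print(f"{label}: {cycle_time_ns(hz):.3f} nanoseconds per cycle")

# A quad-core chip at 3 gigahertz, under the all-cores-busy assumption.
print(f"{peak_cycles_per_second(4, 3e9):.0f} cycles per second at most")
```

At one megahertz a cycle lasts a thousand nanoseconds; at two gigahertz it lasts half a nanosecond, a span in which light travels only about fifteen centimeters.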

Measuring a computer's speed by how fast its heart can beat can be misleading. A fast heart rate does not mean you are a fast runner, and the same is true of computers. A slightly better measure, subject to the same conveyor belt integration problem, is to test the computer's ability to do something basic but useful in the real world, such as adding together two numbers that contain decimal points. This is known as a floating point operation, and the number of such operations completed per second is abbreviated FLOPS. Comparing hertz to flop rates is not always useful, but a Core2 Quad processor from Intel could run at 70 gigaflops (Wikianswers, 2010), 70 billion additions per second. Keeping up with the rapid evolution in computer speeds requires some knowledge of the sequence of prefixes for bytes and flops. For example: megaflop = a million floating point operations per second; gigaflop = a billion; teraflop = a trillion; petaflop = a thousand teraflops, or a quadrillion; and exaflop = a thousand petaflops, or a quintillion. On June 15, 2011 the computer chip manufacturer AMD announced a chip processor that handled 400 gigaflops in a laptop computer and promised 10 teraflop speeds in a laptop weighing a couple of pounds within eight years (Myslewski, 2011^). On November 15, 2011, Intel announced the Knights Corner co-processor chip (image on right) that contains 50 cores (actual independent processors) with a speed of 1 teraflop (Barak, 2011^).
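To get a feel for these prefixes, it helps to ask how long a fixed job would take at each speed. The following Python sketch assumes a hypothetical job of one quadrillion additions and assumes the processor sustains its quoted rate the whole time, which real programs seldom do. The names `FLOPS_PREFIXES`, `to_flops` and `seconds_for` are invented for this example.

```python
# Each prefix is a thousand times the one before it.
FLOPS_PREFIXES = {
    "mega": 10**6,   # million
    "giga": 10**9,   # billion
    "tera": 10**12,  # trillion
    "peta": 10**15,  # quadrillion
    "exa": 10**18,   # quintillion
}

def to_flops(value: float, prefix: str) -> float:
    """Convert, for example, (70, 'giga') into operations per second."""
    return value * FLOPS_PREFIXES[prefix]

def seconds_for(operations: float, flops: float) -> float:
    """Time to finish a job, assuming the quoted rate is sustained."""
    return operations / flops

job = 10**15  # a hypothetical job of one quadrillion additions

for value, prefix in [(70, "giga"), (400, "giga"), (1, "tera"), (1, "peta")]:
    seconds = seconds_for(job, to_flops(value, prefix))
    print(f"{value} {prefix}flops: {seconds:,.0f} seconds")
```

Under these assumptions the job that occupies a 70 gigaflop processor for about four hours is finished by a petaflop supercomputer in a single second.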

Even though our personal computers are going through rapid increases in speed, they are hardly the fastest computing devices around. In the same way that CPUs can be bundled together in one co-processor chip to improve speed, these CPU chips (whether single or multi-processor cores) can be clustered together in a tight network of machines operating in parallel. The fastest computers in the world are not personal computers, but immense room-sized collections of thousands of CPUs called supercomputers. Astonishingly, their rate of improvement in speed is much faster than the rate of change in personal computers over the decades. According to Kirk Skaugen, vice president and general manager of Intel's Data Center and Connected System Group, "(T)he largest supercomputers in the world . . .have actually been growing at about two times Moore's law" (McMillan, 2012^). If you've ever searched Google or ordered something from Amazon.com, your personal computer may have been communicating with a supercomputer. Beyond science and government use, supercomputers are increasingly the backbone of online businesses. For example, Amazon.com and Microsoft's Azure rent out their spare supercomputer space to thousands of other businesses and Web operations through their cloud computing utility services (Takahashi, 2010^). Though the price fluctuates, Google was happy to rent out the 770,000 cores of its Compute Engine supercomputer in 2012 at a rate of $87,000 an hour (Anthony, 2012^), though it would only charge for the fractions of a second needed to solve your problem.

Different companies in different parts of the world have been playing a game of competitive leap-frog for decades, with ongoing announcements of the "newest and fastest" supercomputer. The first 1 megaflop computer was the CDC 6600, which was the world's fastest from 1964 to 1969. The first gigaflop computer was the Cray-2, first achieving gigaflop performance in 1985. The first 1 teraflop supercomputer (shown on the left) was Intel's ASCI Red ("ASCII Red", 2011^), which first became operational in September of 1997 and was used by the Department of Energy's Sandia National Laboratories from 1997 to 2005. To achieve such speed, the system required 72 cabinets of servers. As of 2011, 14 years later, Intel matched the speed of the entire room full of 72 cabinets of machines with a single co-processor chip, Knights Corner, using vastly lower power consumption to achieve 1 teraflop.

The first 10 teraflop computer was a 200,000 pound computer used in the US Department of Energy's Lawrence Livermore Labs in 2001 (Myslewski, 2011^). The world's fastest computer in July 2002 was a supercomputer in Yokohama, Japan that ran at over 35 trillion floating point operations per second (35.86 teraflops) for the Earth Simulator Project, which does climate modeling, merging the work of over 5,000 integrated CPUs. On March 24, 2005, the IBM computer called Blue Gene ran at 135.5 teraflops, harnessed over 32,000 sub-computers (nodes), and was designed for continuous improvements in speed. In December of 2010, the fastest was the Chinese supercomputer Tianhe-1A, which achieved 2.67 petaflops (a petaflop is a thousand teraflops) using 14,000 Intel Xeon 5670-series x86 processors and 7,000 Nvidia Tesla GPUs (graphics processing units) (Perlow, 2010^). IBM announced the summer 2011 installation of the Blue Waters computer, which was to use photonic components (light replacing electrons), run 4 times faster than the Tianhe-1A and aim for exaflop (a thousand petaflops) computing speeds by 2020 or sooner, but the project was then canceled. On June 20, 2011 the Riken Advanced Institute for Computational Science in Japan announced the Kei Supercomputer, an 8.2 petaflops supercomputer made up of 68,000 processors contained in 672 cabinets, with an electrical and maintenance bill of 10 million dollars a year. The Oak Ridge National Laboratory in Oak Ridge, Tennessee, announced the construction of the Titan, built on 18,000 Tesla GPUs based on Nvidia's next-generation Kepler processor architecture, with speeds up to 20 petaflops (Montalbano, 2011^). As of November 14, 2012 it had achieved 27 petaflops, making it the current champ, a status which no supercomputer system now seems to be able to keep for more than a few months. The next big target is exaflop speed, a thousand petaflops, which may be achieved in a few years.

More details on other supercomputers can be found at the TOP500 site. The term supercomputer is highly relative, referring to the fastest computers available on the planet in any given year. Numerous pictures of supercomputers are available online. These pictures indicate that the room-size computer or computer complex has never really gone away. Today's supercomputers need even more space and cooling equipment than the original room-sized ENIAC computer did in the 1940s.

In spite of their rapid growth in capacity, today's computers based on electrons will one day become the dinosaurs of the early history of computer technology. Thought of another way, today's era of electronic or electron-driven computers is like the era of the steam engine in the history of the automobile, which came before today's internal combustion engines. The next generation of computers could be based on photons (light beams), run hundreds of times faster than today's fastest computers and be cheaper to build. The Rocky Mountain Research Center announced the first photonic transistors in 1989 and received the first U.S. patent for them in 1992 (Docstoc, 2010^). IBM's Blue Waters supercomputer was designed to be based in part on photonic transistors. Beyond photonic or light beam transistors, work is underway on nanotechnology, with the first precision control of a single atom transistor announced on February 19, 2012 (Fuechsle et al., 2012^). Computers based on quantum mechanics, known as quantum computing, are also making progress (Metz, 2012^; Tuttle, 2012^). Such developments offer the hope of computers a billion times faster than today's within twenty years. One could surmise from these developments alone that the next fifty years of computer technology will be as dramatic as the first.

On the one hand, the steadily increasing speed of supercomputers is the result of the steady shrinking of the transistors that hold the bits, logic and data of a computing device. This has allowed ever more CPUs to be clustered together at an ever cheaper price and shared over computer networks. Many problems require supercomputer speed. On the other hand, many problems require the more immediate availability of a personal computer that is not shared with anyone, yet can network with anyone and any other computer as needed. From the Osborne portables of the 1980s that were the size of sewing machines to laptops to handheld smartphones, the personal computer continues to shrink in size, weight and cost while giving us immediate access to a CPU.

There is also another way to think about the relationship between basketball-floor-sized supercomputer collections of CPUs and the multicore computing devices in our pockets and shoulder bags. Today's smartphone is yesterday's supercomputer. From 1985 to 1990 the Cray-2 was the fastest computer in the world, operating at around 1.9 gigaflops, which is in the speed range of today's smartphones. That is, in any year in which we buy a new smartphone (e.g., Optimus G, 2012) we are buying the CPU power of a supercomputer from approximately 25 years before, in a device that can also reach current supercomputers for specific needs. Given the additional networking power of that device in our pocket and the range of possible software applications, it is not an exaggeration to claim that the device we carry provides considerably more power than the vintage supercomputer. Further, given the narrow range of what smartphones and even the newest supercomputers can actually do well, you are likely to be walking around with two supercomputers. The battery fueled and air-cooled device is in your pocket and the smarter liquid fueled and cooled device is on your shoulders. How can we help the knowledge and skills required for each to expand the capacity of the other?

Components: RAM/ROM (computer memory)

Image courtesy of NASA

Most computers today also use computer chips for active computer memory, thumbnail-size wafers of silicon or other substances that come in two distinct species, digital and analog. Computers communicate within themselves and with other computers using digital 1's and 0's. To interact with the world around them, they need analog chips that can deal with continuous states of information and translate back and forth between the analog and digital environments.

Memory chips make up the second major part of a computer, and play a very different role than the CPU chip. Memory chips provide high-speed data storage of information for the CPU. Computer memory contains many digital chips which are packed with small switches representing a condition of on or off or a 1 or a 0. Many different techniques and chemical structures are used to make this concept work. Computer "chips" made out of silicon are currently used to manage the state of these switches. Silicon is one common substance used to create computer memory, but it was not the first, and is certainly not the last. For example, serious work is being done on using the chemical structure of proteins to create computer memory. Biochips are in your future.

Computer chips are designed to serve several different kinds of memory needs. Though RAM and ROM are the most common forms of computer memory chips, there are other forms of which EPROM is one example.

When purchasing additional RAM for a computer, the computer manual that ships with your computer will tell you which kind you need. These chips might be called SIMM or DIMM chips, and new types will emerge. Over the long term RAM prices have dropped steadily, but over a period of months the price fluctuates considerably in high and low cycles.

Bytes are discussed in different size units: kilobyte = 1000 bytes (K or KB); megabyte = a million bytes (M or MB); gigabyte = a billion bytes (GB); terabyte = a trillion bytes (TB). These are followed on up the scale by petabyte, exabyte, zettabyte, and yottabyte. We are in a transition from gigabytes to terabytes of RAM. As someone whose first computer contained 16K of RAM nearly twenty-five years ago, I still find this astonishing.
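The ladder of byte units can be walked in code. This sketch follows the thousand-per-step convention used in the paragraph above (some software counts 1,024 per step instead); the function name `human_readable` is invented for this example.

```python
# Units in ascending order, each a thousand times the one before it,
# following the decimal convention used in the text.
UNITS = ["bytes", "KB", "MB", "GB", "TB", "PB", "EB", "ZB", "YB"]

def human_readable(num_bytes: int) -> str:
    """Express a byte count in the largest unit that keeps the value under 1000."""
    value = float(num_bytes)
    for unit in UNITS:
        if value < 1000 or unit == UNITS[-1]:
            return f"{value:g} {unit}"
        value /= 1000

print(human_readable(16_000))          # the 16K machine mentioned above
print(human_readable(2_500_000_000))
print(human_readable(10**12))

# How many 16K memories would fit inside one terabyte?
print(10**12 // 16_000)
```

The last line gives 62,500,000: a single terabyte holds the memory of more than sixty million of those early 16K machines.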

Optional Readings:

Your skin contains millions of sensors that detect temperature, touch and more. A desktop computer might contain one that controls the cooling fan. Current computer systems are ages behind biological systems in integrating sensors. The simple, limited two-state range of digital chips continued to work well as the size of components shrank and shrank. Unfortunately, analog circuits have tended to get worse as they shrink.

It wasn't until the 1990s that a process was developed to deal with this problem of analog miniaturization. "The process is modeled on how the human brain adjusts the nerve cells. Called 'self-adaptive silicon' technology, it can monitor the chip's functioning and reset it to adapt to changes in temperature or battery power. ...Impinj's analog circuits are simple to design because they self-tune, are small because the transistors themselves compensate for mismatch and degradations, and (because they) learn from their inputs" (Frishberg, 2002^). Bill Colleran is CEO of Impinj, whose patents are based on the work done at Caltech by Carver Mead and his former student, Christopher Diorio.

Analog chips are not exclusively analog, but rather also have digital chip components. For example, a wave of sound enters the mouthpiece of a cell phone and an analog chip converts the sound to digits, ones and zeros, which are then transmitted to another cell phone, whose digital chips pass them to analog chips that create the sound the listener hears.
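The conversion of a sound wave into ones and zeros can be sketched in a few lines. This is only an illustration of the general idea of sampling and quantizing, not a description of how any particular phone chip works. The function names, the 1,000 Hz tone, the 8,000 samples per second and the 4 bits per sample are all assumptions chosen to keep the output small.

```python
import math

def sample_and_quantize(frequency_hz, sample_rate_hz, n_samples, bits):
    """Sample a sine wave and round each sample to one of 2**bits levels."""
    levels = 2 ** bits
    codes = []
    for n in range(n_samples):
        t = n / sample_rate_hz                                  # time of this sample
        amplitude = math.sin(2 * math.pi * frequency_hz * t)    # analog value in [-1, 1]
        # Map the range [-1, 1] onto the integer codes 0 .. levels - 1.
        code = round((amplitude + 1) / 2 * (levels - 1))
        codes.append(code)
    return codes

def to_bits(codes, bits):
    """Write each integer code as a fixed-width string of ones and zeros."""
    return [format(code, f"0{bits}b") for code in codes]

codes = sample_and_quantize(frequency_hz=1000, sample_rate_hz=8000, n_samples=8, bits=4)
print(codes)
print(to_bits(codes, bits=4))
```

The receiving phone would run the process in reverse, turning each group of bits back into a voltage level that drives the speaker.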

Components: I/O (Input/Output devices)

Image courtesy of NIH

Here are some examples:

Can you think of other examples that fit in these three categories of I/O?

Some I/O topics are so important that they require further detail.

If...you...talk...with...pauses...between...your words, computers have been able to understand human speech since the early 1970's. But no one wants to talk like that, at... least...for...very...long. The goal has always been to enable computers to understand our continuous speech. There are no pauses between our words when we talk normally. The sounds of a sequence of words are more like a fluid than a series of sound bites. The brain reasons it out on the fly. Now, vendors say that they have "fluidic" speech recognition working reasonably well. Both Macintosh and Windows have included a speech recognition feature for single word commands. They promised that if you can talk, the computer can type it in as you say it. This development was followed on smartphones with better fluidic capacity with products named Siri (Apple iOS) and voice recognition in the Droid smartphone software.

To search for even more recent news, use these search phrases: fluidic speech; continuous speech; voice recognition; speech recognition; speech analysis.

For more information see Wikipedia's speech synthesis page and speech synthesis or speech generation search items.

There are a number of pros and cons to digital books, but given the number of sales of highly mobile devices with book reader software, the option to use one or the other is becoming very widespread. Pros: digital books can be quickly updated and redistributed; readers can easily search for a specific passage; publishers could save money by ending the use of paper; special options are possible for note taking and information organizing within the book itself; creators could include more charts and images and raw data that are often eliminated now due to printing costs; readers can carry vast quantities of information, large libraries of books and articles; no shipping costs for book delivery because the book is delivered via the modem built into the device; allows rapid distribution of books including many thousands of books no longer under copyright protection and now online. Cons: putting books online speeds book piracy; it becomes more difficult to track the more frequent revisions possible with electronic technology; legibility is still not the equal of paper; too often the digital book costs as much as or more than the paper book.

Optional readings:

Some forms of electronic ink can be printed on almost any surface, from billboards to flexible sheets of paper. Xerox says that its Gyricon product is, "...a rubberized, reflective substance made entirely of microscopic beads that are half black, half white. The Gyricon material is laminated between two sheets of plastic or glass and has the thickness of about four sheets of traditional paper. Before it can be used, Gyricon is filled like a sponge with oil. This creates a cavity for each bead, allowing it to rotate."

Optional readings:

Forget the book (which is a hand-held display), and just wear TV screens with your glasses. The emerging standard of measurement for comparing wearable devices is the 2 meter standard. That is, the field of view of a given head-mounted display (HMD) provides the perception of viewing a wide-screen TV of a certain width from two meters away. Horizontal widths of 42, 52, and 62 inches are currently (January 2001) being advertised. Though Eye-trek is currently designed for standard video displays such as those from television, DVDs and VCRs, high definition television and computer display will not be long in coming. By comparison with computer screens, these HMDs have slightly better than an 800 by 600 pixel resolution. Visit the home pages of these companies to see people wearing these products.

Such technology would allow confidential personal computer display. This would also allow a wider range of computer applications to be integrated into hand-held and smaller computers. These devices also can provide the perception of being "in the scene" instead of viewing the scene from a more distant and removed perspective, an experience also called immersive display capability. Immersive capacity currently has immediate application in architecture, surgery and entertainment such as gaming and movies. It remains to be seen when such displays will be considered highly competitive with quality computer monitors or even the high quality of printed pages.

Optional readings:

One critical part of computer development is mass storage, the storage of the computer's bits that cannot be permanently kept in computer memory and the residence for those bits when electrical power is turned off. Today's mass storage systems (diskettes, hard drives, ZIP drives, CDs, DVDs) that hold our applications and data when electrical power stops are based on spinning motors. In the long term, anything with a spinning motor in it is likely to become obsolete as new forms of memory are developed. Spinning motors are a major part of the weight of laptop computers, have an intense need for electricity and a fairly high potential for failure or breakage, especially if moved or bumped while being used. In the meantime, they are essential to the expanding needs for computer storage. Mass storage is generally broken into two categories: fixed and removable. Fixed data storage refers to technology like the hard drive of the computer that stays put in the computer when its user goes elsewhere, often after putting the information on a floppy diskette for transfer.

Fixed Data Storage

Hard drive prices have dropped quickly over the decades. This drop has even been much faster than the drop in prices and increase in capacity of computer chips. In the mid-1980's, 10 MB (millions of bytes) cost around $1,000. The largest consumer hard drive as of November 2000 was an 80 GB drive that sold for around $400.00 and that capacity doubled the following year for roughly the same price. By 2003, 60 GB drives were selling for $100. Going from 10 MB for $1,000 to 60 GB for $100 over roughly 20 years represented a 60,000-fold drop in the price per megabyte of mass storage (Delong, 2003). In 2005, 500 GB drives sold in the $400 range. At the same time, these hard drives have become significantly faster. Writeable and erasable CDs and DVDs are also becoming common. As DVDs have far greater capacity than any other form of removable data storage, they represent the clear winner over the next two or three years for removable storage. It is reasonable to expect the current DVD capacity of 8.6 gigabytes per side to grow to 30 gigabytes or 60 hours of VHS video on one side. Multimedia developments such as high-resolution imagery and digital audio and video require ever larger capacity disk drives.
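The 60,000-fold figure can be checked with a little arithmetic on the prices quoted above. The sketch below is only a worked check. The function name is an illustrative assumption, and it assumes the decimal convention of 1,000 MB per GB.

```python
MB_PER_GB = 1000  # decimal convention, an assumption for this check

def dollars_per_megabyte(price_dollars, capacity_megabytes):
    """Price of one megabyte of storage."""
    return price_dollars / capacity_megabytes

mid_1980s = dollars_per_megabyte(1000, 10)              # 10 MB for about $1,000
year_2003 = dollars_per_megabyte(100, 60 * MB_PER_GB)   # 60 GB for about $100

fold_drop = mid_1980s / year_2003
print(f"mid-1980's: ${mid_1980s:.2f} per MB")
print(f"2003: ${year_2003:.5f} per MB")
print(f"price per MB fell roughly {fold_drop:,.0f}-fold")
```

The drop comes from two changes multiplied together: the capacity grew 6,000 times while the price fell to one tenth.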

Based on current trends, it is reasonable to expect a terabyte of data storage for $100 in another few years while systems with a thousand terabytes (a petabyte) and more will not be unusual. As just one example, this will bring unprecedented developments to the concept of diary and autobiography as people strive to store every waking moment of their lives (Piquepaille, 2003). Adam Couture, an analyst at Gartner in Connecticut, predicts worldwide storage capacity will "swell from 283,000 terabytes in 2000 to more than 5 million terabytes by 2005" (Hildebrand, 2002^). The latter 5 million number is also equal to 5,000 petabytes or 5 exabytes.

Mobile Data Storage

Removable data storage is undergoing numerous changes in media. Floppy diskettes have become extinct. They hold too little and transfer data too slowly for the larger files increasingly in use. Apple Computer stopped making them a standard part of computers in 1998. Dell Computer decided in 2003 to follow Apple's lead and no longer put them in new computers unless requested (Casciani, 2003^).

There are other more effective competitors for mobile mass storage. Removable "memory cards" are rapidly closing the gap with motor driven memory. Gigabyte memory chips have recently become available for use in digital still cameras and it is only a matter of time before general computers acquire the capacity to use them as well. Chip manufacturers are estimating that by 2005 the memory cards will hold 10 gigabytes of memory and become the primary form of storage, replacing floppy diskettes, CDs, DVDs and ZIP disks. Sets of these memory cards could replace high capacity hard drives too. Removable memory cards are the eventual death knell of spinning motors in computers, whose spinning drives are destined to become the dinosaurs of mass storage.

Images courtesy of Thumbdrive and http://www.futurelooks.com/

In terms of cost, flash drives are increasingly competitive with other media but are not yet cheaper per megabyte. For example, a 32 MB flash drive could be bought for as low as $25 in December of 2002. It would take 23 floppy diskettes to provide the same storage capacity and if you can find the diskettes for fifty cents a disk, those 23 diskettes will cost $11.50. Of course, it is much easier to carry around one flash drive than 23 diskettes and the speed at which USB information is transferred is much faster than diskettes. A single 100 MB ZIP drive cartridge can be found for $8.00 but a 128 MB flash drive has a lowest price of $79 (January, 2003). Overall these chip drive prices appear to be halving every six months, so that at some point, flash drives will become the cheapest. To keep up with the latest in low prices, try the phrases "keychain drives" or "USB Flash drives."
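The diskette comparison and the six-month halving trend can be worked through as a rough sketch. The 1.44 MB diskette capacity is an assumption (the standard high-density 3.5-inch size, which the text does not state), the function names are illustrative, and the projection assumes diskette prices stay fixed while flash prices keep halving on schedule.

```python
import math

FLOPPY_MB = 1.44       # assumption: standard high-density 3.5-inch diskette
FLOPPY_PRICE = 0.50    # fifty cents a disk, as in the text

def diskettes_needed(capacity_mb):
    """Whole diskettes required to hold capacity_mb megabytes."""
    return math.ceil(capacity_mb / FLOPPY_MB)

def price_after(months, start_price, halving_months=6):
    """Price after `months` if it halves every `halving_months` months."""
    return start_price * 0.5 ** (months / halving_months)

def months_until_at_most(start_price, target_price, halving_months=6):
    """First whole month at which the halving price is at or below target_price."""
    months = 0
    while price_after(months, start_price, halving_months) > target_price:
        months += 1
    return months

flash_price, flash_mb = 25.00, 32      # December 2002 figures from the text
floppies = diskettes_needed(flash_mb)
floppy_cost = floppies * FLOPPY_PRICE

print(f"{floppies} diskettes cost ${floppy_cost:.2f}")
print(f"flash: ${flash_price / flash_mb:.2f} per MB, "
      f"diskettes: ${floppy_cost / flash_mb:.2f} per MB")
print("months until the flash drive matches the diskette cost:",
      months_until_at_most(flash_price, floppy_cost))
```

Under these assumptions the crossover arrives in well under a year, which is why a price gap of two to one is not much of a barrier for a technology on a halving curve.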

USB drive technology continues to improve. The first drives used USB 1.1, which provides a great speed improvement over floppy diskettes. The newer flash drives use the USB 2.0 design, which is nominally some 40 times faster (480 megabits per second versus 12 megabits per second). The speed of these USB devices will continue to increase to their technical limit in the years ahead. The technical top speed is some 60 MB of data per second, which is faster than the fastest drives of any type in common use in the year 2003.
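The 60 MB per second ceiling follows from converting bits to bytes. The sketch below uses the nominal signalling rates from the USB specifications, 12 megabits per second for USB 1.1 full speed and 480 megabits per second for USB 2.0 high speed. The function names are illustrative, and real drives deliver less than these theoretical maximums.

```python
BITS_PER_BYTE = 8

def megabytes_per_second(megabits_per_second):
    """Convert a line rate in megabits per second to megabytes per second."""
    return megabits_per_second / BITS_PER_BYTE

def seconds_to_copy(size_megabytes, megabits_per_second):
    """Best-case time to move size_megabytes over a link of the given rate."""
    return size_megabytes / megabytes_per_second(megabits_per_second)

usb_1_1 = megabytes_per_second(12)     # nominal USB 1.1 full speed
usb_2_0 = megabytes_per_second(480)    # nominal USB 2.0 high speed

print(f"USB 1.1: {usb_1_1} MB per second")
print(f"USB 2.0: {usb_2_0} MB per second")
print(f"filling a 128 MB drive over USB 1.1: {seconds_to_copy(128, 12):.0f} seconds")
print(f"filling a 128 MB drive over USB 2.0: {seconds_to_copy(128, 480):.1f} seconds")
```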

DVD and CD burners make a third category of common mobile storage. DVD prices have dropped to the point that the demise of CD burning technology is not far off, given that DVD holds much more data. Where CD technology holds a maximum of 700 MB, DVD capacity has been in the 5-10 gigabyte range using the current red laser technology. Blue laser technology formats have been approved by the international standards consortium for DVD and will hold 15 to 20 gigabytes of data, which at the maximum capacity of this next generation means that some 3 hours of high definition television could be stored on one side. Companies have predicted such technology will begin to appear in computer systems in 2005 (Associated Press, 2003^).

It should be noted that for most file sizes in use for standard word processing documents and still images, sending files to oneself or others as email attachments bypasses the need for removable data storage devices. Having a personal network storage account, such as for a web site, allows even larger files to be stored on the network for later use, though large-size file storage generally means having access to high speed networks so that the data can be transferred as fast as with removable media. With large files, what takes just minutes for data transfer with removable data storage could take many hours with slow network access.

Researchers see even greater capacities in future years by moving to organic compounds, including novel plastics. One line of research is pursuing non-erasable technologies, fine for family pictures and corporate data storage, both situations in which the users do not want data erased. Another line of research will be erasable. Both approaches suggest that simple production lines will roll out plastic sheets that can be stacked for dense memory, instead of the environmentally controlled and therefore expensive settings needed for chip production (Eisenberg, 2003^).

Users connect to the Internet in a wide variety of ways, both hardwired and wireless. The speed at which these users connect is a key measure of Internet capacity and usefulness. This speed has increased significantly over the years, moving data at ever faster paces.

The speed at which computers communicate is measured in bits of information per second. In 1998, the typical device used at home allowed a computer to trade data with other computers using a modem that had a maximum capacity of 56 Kbps (56,000 bits per second or bps). The term modem is a contraction of modulation-demodulation. To modulate your data is to transfer the state of a bit (on or off) to a wave of sound that can go down a telephone line or to a wireless signal. At the other end the sound is demodulated, converted from sound back to a bit, a switch in the computer that is either on or off. A decade later DSL and cable modems brought the speed to 1 megabit per second (1 million bits per second) and higher for home use, and to gigabit speeds (billions of bits per second) for institutions that could pay for the greater speeds. The 4G wireless networks being deployed by wireless telephone carriers in 2012 reached speeds of 2 to 40 megabits per second (Mbps). Direct wired connections, however, have delivered much higher speeds. With glass fiber lines that use lasers instead of copper lines that use electrons, average top speeds have reached 20-40 gigabits per second and much higher.
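What these connection speeds mean in practice can be sketched by timing the transfer of one large file. The 700 MB file size (a full CD) and the link labels are assumptions chosen for illustration. The calculation ignores protocol overhead and line noise, so real transfers take longer.

```python
def transfer_seconds(file_megabytes, link_bits_per_second):
    """Best-case seconds to send a file, ignoring overhead."""
    file_bits = file_megabytes * 1_000_000 * 8   # decimal megabytes to bits
    return file_bits / link_bits_per_second

def readable(seconds):
    """Express a duration in the largest sensible unit."""
    if seconds >= 3600:
        return f"{seconds / 3600:.1f} hours"
    if seconds >= 60:
        return f"{seconds / 60:.1f} minutes"
    return f"{seconds:.1f} seconds"

LINKS = {
    "56 Kbps dial-up modem": 56_000,
    "1 Mbps DSL or cable modem": 1_000_000,
    "40 Mbps 4G wireless": 40_000_000,
    "1 Gbps institutional line": 1_000_000_000,
}

for name, bits_per_second in LINKS.items():
    print(f"{name}: {readable(transfer_seconds(700, bits_per_second))}")
```

The same file that ties up a dial-up line for more than a day moves across a gigabit line in seconds, which is the gap that separates emailing a document from storing whole libraries on the network.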

Cloud Data Storage

Given the high speeds of modern networks, it became feasible to store information on the network outside of the computer's directly connected hard drives. Cloud computing is a marketing term, a metaphor for storing information somewhere on the Internet or global computer network. The concept of the term "cloud" is meant to communicate that the user does not need to know where the data is stored physically. It doesn't matter; "your data is stored in the cloud". It is about providing access to computing power as a service, not a product, like water and electricity. The actual location is handled by the company providing the cloud service. You don't ask where your electricity is coming from; you just want to buy and have it delivered to where you need it. The same concept is being applied to cloud computing.

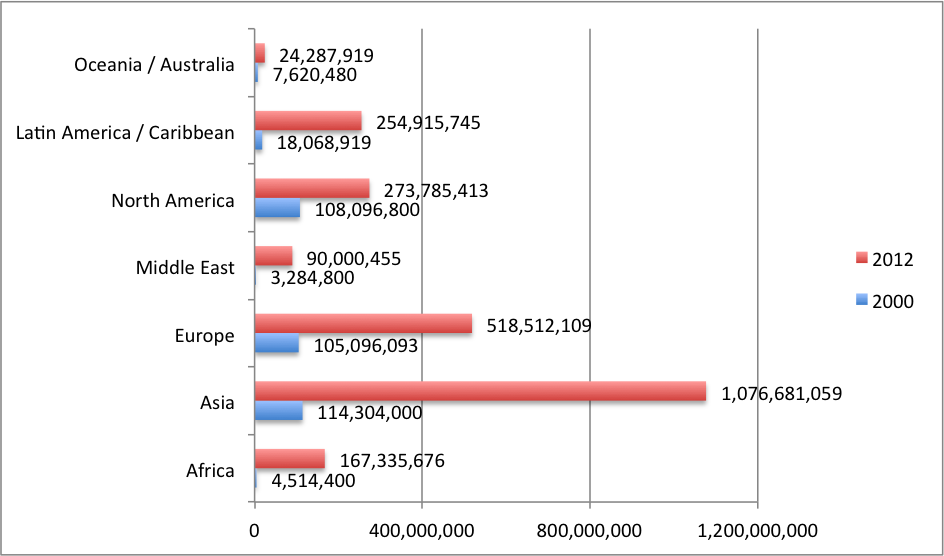

All of the previously discussed I/O features enhance our personal computers. Networking pushes beyond the power of one individual computer, and in that push all kinds of additional "magic" have occurred. Perhaps the most significant I/O feature of computers is this capacity to network, to interact by trading data with each other, which has created enormous capacity for the citizens of the world to team up and add value to our cultural systems. As a result the number of Internet users doubled from 2002-2007 and then doubled again from 2007-2012 ("World", 2012^). The further nature of this development is shown in the charts and graphs below. This networking I/O feature adds the staggering capacity of the world's computer systems to our personal devices. The importance of this development deserves a deeper look.
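A doubling every five years can be restated as a yearly growth rate. The sketch below is a simple compound-growth calculation under the assumption that growth was steady within each five-year span, which the source does not claim. The function name is illustrative.

```python
def annual_growth_rate(growth_factor, years):
    """Constant yearly rate that multiplies a quantity by growth_factor in `years`."""
    return growth_factor ** (1 / years) - 1

rate = annual_growth_rate(2, 5)    # doubling in five years
print(f"implied growth: {rate:.1%} per year")

# Two successive doublings (2002-2007, then 2007-2012) compound to a fourfold rise.
ten_year_factor = (1 + rate) ** 10
print(f"growth over ten years: {ten_year_factor:.1f}x")
```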

The global scene has been changing rapidly over the last decade or so. Note the significant differences in growth in the number of users between the two sets of data in columns C & D. This data is from the World Internet Stats site.


The rapidity with which use of the Internet exploded over a longer period, the 2000-2012 time period, can perhaps be better seen by putting this data in an Excel bar graph.

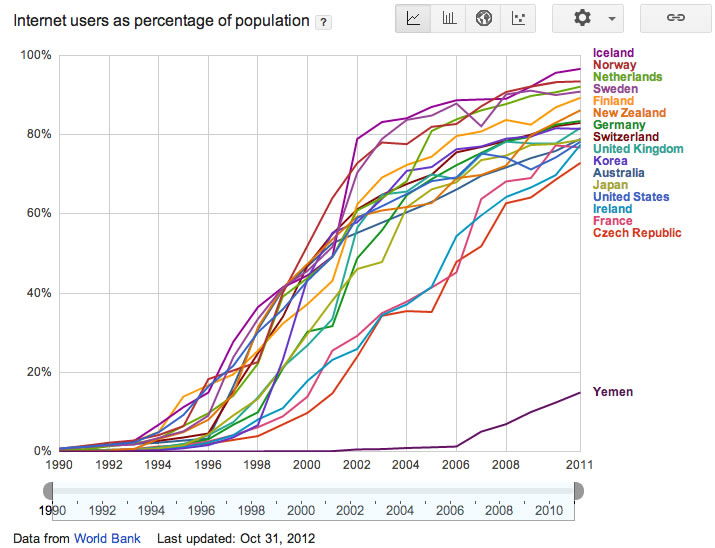

Looked at country-by-country in the graph below, a different perspective emerges. Low income countries, using economically challenged Yemen for example, are only just beginning their Net growth, but countries that were already leading in industrial age activity are rapidly nearing 100% of their citizens accessing the Net. This growth is transforming the way businesses and services are managed and organized in these leading countries. This graph is intended to show an overall growth pattern among the countries with the wealth to invest in the Net more heavily. To isolate the data for individual countries, use Google's Public Data charting features for this graph.

The evolution of the number of people online or accessible through the Internet and the World Wide Web is an important statistic for both educators and entrepreneurs (business and non-profit creators). These users have served as a foundation or infrastructure for the more complex developments of ecommerce (online business transactions) and social Web developments for numerous concerns. Between 2000 and 2010, the percentage of manufacturing shipments in the United States that were handled through ecommerce rose from 18% to 46% of all manufacturing shipments, more than doubling. Though still a tiny percent of the overall retail trade, online retail marketing and sales quadrupled from 0.9% to 4%, representing some $145 billion in sales (U.S. Census Bureau, 2012^). The Department of Commerce has put the figure even higher at $165 billion (Enright, 2011^), a growth rate of 20% a year that shows every sign of continuing.

Networking, though, is also about what can be created within the network, not just about how many are networking and how much money is being made on how much product is being shipped. Over the twenty years from 1990 onward, ever higher quality and more sophisticated network designs have been emerging. Though there are numerous social systems, the phone was really the first social technology. It transitioned to the basic cell phone, the world's first consumer computer terminal, a not-so-smart phone that was capable of sending text messages through the computer systems of the phone companies to other not-so-smart phones. Text messaging began with the first text message on December 3, 1992 and twenty years later was globally handling some 7 trillion text messages a day (Newman, 2012^). These phone company systems are in turn transitioning to computer based text messaging systems such as Twitter, Facebook, and Apple and Google smartphones, which all have their own internal messaging systems. Almost half the world's 2.27 billion Internet users ("World", 2012^) have Facebook accounts, 1.1 billion accounts, for sharing social media including text, images, sound and video. Some 600 million Facebook users are active every day ("Number", 2012^). YouTube grew from its founding in a garage in 2005 to the uploading of 60 hours of video every minute in 2012 ("YouTube", 2012), with over 4 billion videos viewed a day (Oreskovic, 2012^). By 2012 Google was using speech recognition technology to automatically translate YouTube speech and captions into 51 languages, and the site was localized in 22 countries covering 24 languages ("YouTube Facts", 2010^). Flickr went from its founding in 2004 to uploading over 40 million images every month in 2012 (Michel, 2012^), while Facebook was uploading over 300 million photos a day in 2012 and added new features to accelerate that rate for 2013 (Constine, 2012^).
These companies led the way in providing the foundation, models and fuel for further developments.

Now that such large numbers of people are actively and comfortably using cyberspace in so many ways, Khan Academy has in turn built on these stepping stones to create the foundations of a free online school that was founded in 2009. By April of 2012 Khan Academy's Web site, using the freely available YouTube site for its tutorial videos, had delivered some 140 million+ lessons in that short period of time. Further, 500 million+ practice exercises were completed; the system had 6+ million users per month (Sheninger, 2012^). This in turn has contributed to a debate on the lessons to be learned from the Khan Academy experiment, a debate that will undoubtedly lead to new and better models that build on computer networking capacity (Sheninger, 2012; Talbert, 2012^).

Part of the point here in giving such attention to networking is that the rapid growth and utilization of computer networking I/O drives the further use of all of the parts of a computer and the need to know more about the features and options of each. Such growth is also a reasonably good measure of the growing need for greater digital literacy and for greater capacity to participate in the new economic and other cultural developments in cyberspace. If a single Wall Street hedge fund investor can out-innovate the world of education and educational publishers with a webcam, a laptop, free YouTube and networked computers, what will happen when an ever larger percentage of the world's citizens reaches high levels of digital literacy?

Many designs reflect the effort for the biggest. Designers combine as many mass storage units, memory chips and ever faster CPUs as possible into one system. Supercomputers and massively parallel computers are one result of this line of thought. The World Wide Web and the Internet with its billions of interconnected computers is another. Other rapid developments such as designing the capacity to compose and display or use the most kinds of media have almost leveled off.

The other approach is to see what the smallest devices are that can still do something useful. Shrinking the size of components allows engineers to recombine the three basic components of computers (CPU, RAM/ROM, and I/O) in novel ways. Palm or pocket computers have been one step along this line of thinking. The designs of these Personal Digital Assistants (PDAs) have converged with cell phones, creating products that some have called smart phones. Using the same device, users make telephone calls and run searches with a web browser. Further, this device automatically recognizes the available wireless signals, using wireless Internet signals or cell phone towers for calls, whichever is better.

One of the more personal developments at the edge of change is the concept of wearable computers and wearable computer networks. The trend began with watches and is expanding across our wardrobes. "A person's computer should be worn, much as eyeglasses or clothing are worn, and interact with the user based on the context of the situation. With heads-up displays, unobtrusive input devices, personal wireless local area networks, and a host of other context sensing and communication tools, the wearable computer can act as an intelligent assistant, whether it be through a Remembrance Agent, augmented reality, or intellectual collectives" (http://www.media.mit.edu/wearables/, online June 30, 2003).

It is not that big a step to envision our glasses (switchable as heads-up display), watch (providing numerous sensors reporting on our well being as well as reporting on conditions in our surroundings such as location, barometric pressure or altitude), PDA and cell phone in wireless communication with each other and other wireless networks, all at our command.

As components shrink, even more options will be considered by computer and textile engineers to solve problems for our global culture and personal lifestyles.

Optional reading:

---- (August 6, 2003). Boston Public School District Using Unique Mobile/Wearable Computers. eSchoolNews Online. http://www.eschoolnews.com/resources/partners/showrelease.cfm?ReleaseID=362.

Educators must also be knowledgeable about the educational values of current and coming innovations in technology and how they fit into those trends. This includes three major areas of a computer: the CPU; computer memory (RAM/ROM, etc.); and I/O, especially mass storage and communication between the computer and other devices. The shrinking of both the size and cost of computers will continue as the most visible measurements of the change in computer technology which impacts its educational integration, but not the only ones.

In this fourth of the ages of computing, the computer has three major parts or divisions that each grow and accelerate their capacity as new designs for their components appear. How should educators balance the teaching of four generations of thinking technology, integrate planning for the major trends in our fourth generation thinking tools and keep up with the evolution of our digital magic wand?

Lightly touched by this composition is consideration of how to organize learning, curriculum and instruction in light of such trends and 21st century technologies. How can or should we organize our communities and our schools to take maximum advantage? How can the use of these 21st century technologies enhance and accelerate our teaching and learning with even the most basic topics of reading, writing and speaking? That work is of such a scope that it must be left for a separate composition, Communities Resolving Our Problems - the Basic Idea, and book length works that address these ideas in greater detail.

New understanding and new curriculum will inevitably emerge. It should now be clearer that in an age of accelerating change, educators have a tremendous opportunity and obligation to dig deeply into the numerous possibilities of fourth generation or computing technologies for problem discovery and problem solving. Further, 4th generation technologies should be used to revitalize attention with the first three generations. However, our overwhelming attention to our astonishment with 4th generation thinking technology may keep us from seeing a still larger picture. With time, we may need to return to the thought that the real computer is still a human being, that digital technology is merely one more new ally for the real computer, and that this digital ally has evolved far beyond its use in scientific and mathematical calculation. For in fact, the digital technology embedded in our desktop and other computer systems does not think and will not think with the human richness that should be associated with that term. Human beings, not computers, must be the hub around which we balance our needs for digital and analog thinking. Put in another way, the specialized support for thinking that digital computers provide is but one more important element on the palette for the more wide ranging and more significant nature of human thought.

ASCI Red (2011). Wikipedia. http://en.wikipedia.org/wiki/ASCI_Red

Anthony, S. (2012 June 28). Google Compute Engine: For $2 million/day, your company can run the third fastest supercomputer in the world. ExtremeTech. http://www.extremetech.com/extreme/131962-google-compute-engine-for-2-millionday-your-company-can-run-the-third-fastest-supercomputer-in-the-world

Barak, S. (2011, November 16). Video: Intel's Knight's Corner. EE Times. http://www.eetimes.com/electronics-news/4230708/Exclusive-Video--Intel-s-Knight-s-Corner#68553

Constine, J. (2012, December 1). Facebook Makes A Huge Data Grab By Aggressively Promoting Photo Sync. http://techcrunch.com/2012/12/01/facebook-photo-sync-data/

Enright, A. (2011, February 11). E-commerce sales rise 14.8% in 2010. Internet Retailer. http://www.internetretailer.com/2011/02/17/e-commerce-sales-rise-148-2010

Fuechsle, M., Miwa, J. A., Mahapatra, S., Ryu, H., Lee, S., Warschkow, O., Hollenberg, L. C. L., Klimeck, G., & Simmons, M. Y. (2012, February 19). A single-atom transistor. Nature Nanotechnology. http://dx.doi.org/10.1038/nnano.2012.21 http://www.nature.com/nnano/journal/vaop/ncurrent/full/nnano.2012.21.html

Hilbert, M. & López, P. (2011, February 10). The World's Technological Capacity to Store, Communicate, and Compute Information. Science. DOI: 10.1126/science.1200970. Retrieved February 11, 2011 from http://www.sciencemag.org/content/early/2011/02/09/science.1200970

Houghton, R. S. (2011). The knowledge society: How can teachers surf its tsunamis in data storage, communication and processing? http://www.wcu.edu/ceap/houghton/readings/tech-trend_information-explosion.html

McMillan, R. (2012, January 24). Intel Sees Exabucks in Supercomputing's Future. Wired Magazine. http://www.wired.com/wiredenterprise/2012/01/supercomputings-future/

Metz, C. (2012, February 28). IBM Busts Record for 'Superconducting' Quantum Computer. Wired Magazine. http://www.wired.com/wiredenterprise/2012/02/ibm-quantum-milestone/

Michel, F. (2012). How many photos are uploaded to Flickr every day and every month? http://www.flickr.com/photos/franckmichel/6855169886/

Montalbano, E. (2011, October 11). Oak Ridge Labs Builds Fastest Supercomputer. InformationWeek. http://www.informationweek.com/news/government/enterprise-architecture/231900554

Newman, J. (2012, December 3). Text messaging turns 20, but don't call it ready for retirement. PC World. http://www.pcworld.com/article/2018289/text-messaging-turns-20-but-dont-call-it-ready-for-retirement.html

Number of active users at Facebook over the years. (2012). Associated Press. http://finance.yahoo.com/news/number-active-users-facebook-over-years-214600186--finance.html

Oreskovic, A. (2012, January 23). YouTube hits 4 billion daily video views. Reuters. Retrieved January 23, 2012.

Sheninger, E. (2012, April 23). Khan Academy: Friend or Foe? http://esheninger.blogspot.com/2012/04/khan-academy-friend-of-foe.html

Talbert, R. (2012, July 3). The trouble with Khan Academy. The Chronicle of Higher Education. http://chronicle.com/blognetwork/castingoutnines/2012/07/03/the-trouble-with-khan-academy/

Tuttle, M. (2012, March 21). Quantum Computing Goes Beyond Binary Quantum Bits Come Into Play. WebPro News. http://www.webpronews.com/quantum-computing-beyond-binary-2012-03

U.S. Census Bureau (2012, May 10). 2010 E-commerce Multi-sector Data Tables (Released 2012, May 10). http://www.census.gov/econ/estats/2010/all2010tables.html

YouTube Facts & Figures (history & statistics). (2010). http://www.website-monitoring.com/blog/2010/05/17/youtube-facts-and-figures-history-statistics/

World Internet population has doubled in the last 5 years. (2012). Royal Pingdom. http://royal.pingdom.com/2012/04/19/world-internet-population-has-doubled-in-the-last-5-years/